
Introduction

Data is the cornerstone of businesses from large enterprises to small D2C brands, and huge amounts of it can be collected from websites, mobile apps, chat messages, call centers, business transactions, surveys, and social media platforms, among other channels. All this data represents a gold mine of information that can offer customer insights and lead to new ideas for features or products. However, making sense of the data is easier said than done. The information originates from various channels and in multiple formats. It can be logged erroneously and contain other errors, including missing values. Because it comes from multiple domains, it can include unstructured data like text, images, audio, and video. That is why data preparation is essential. This involves cleaning, curating, transforming, and storing data sets for downstream applications including data analytics and data visualization, as well as predictive intelligence based on machine learning and deep learning models. Data can only provide value once it has been processed from its raw form, and effective data preparation can maximize that value. This article will explain the process of data preparation, especially in terms of data labeling, and will provide a checklist for data engineers to follow.

What Is Data Preparation?

Data preparation is not an entirely new process in technology companies. Data-driven operations previously focused on statistical analysis of business data from structured tables. Deep learning has grown over the past decade along with the global penetration of mobile phones, widely available internet access, and cheaper cloud storage facilities. Today an estimated 2.5 quintillion bytes of data are being generated daily. Every user interaction with online companies is recorded, from someone clicking an ad or adding a product to a shopping cart to sharing a photo on a social media app. User-generated data is generally unstructured data: images, text, audio, or video. 
Such data can be used to train sophisticated deep learning models to predict what users want to type in a text, which branded products are featured in an image, and what kind of customer service will be provided in a phone conversation. For deep learning models to make sense of this data, all data samples need to be labeled. Data labeling tells the machine learning models what knowledge they need to acquire via supervised learning to power smart applications. This makes labeling critical in preparing data sets for training machine learning models. However, data labeling can also represent the chief source of errors, affecting potential improvement in model performance. Machine learning models can only be as accurate as the labeled data, which represents the models’ entire knowledge for the particular use case. For example, the source image data set in a face recognition program requires a label for every face shown in every image. During the labeling process for this data set, every image is reviewed by human subject matter experts, crowdsourced labelers on platforms like Amazon Mechanical Turk, or algorithms. Labeling helps clean and prepare the data set by removing noisy or unusable data. In this case, images that don’t contain any faces, or that show unreadable faces due to poor lighting or angles, should be removed because they won’t be helpful in training a face recognition model. This step also ensures the inclusion of images that are most helpful for the desired use case. Once the data set is reviewed and annotated, it can be used for all subsequent face recognition applications instead of going back to the raw data set. This saves time and effort for data engineers, as well as data scientists who might build novel models using the same data set. Additionally, multiple labels and metadata can be applied to each image during the labeling process so that they’re available for new use cases. 
A tag that identifies the face as that of a man, woman, or child can be used for different computer vision applications. This can potentially give the data set more flexibility for the future. The labeling can be built upon in subsequent versions of the data set. Once the face recognition model is live in production, new images can be labeled to help the model overcome data drift and augment its performance in the face of changing data distributions. This continued labeling and organizing keeps the models more robust and consistent.

Data Preparation Steps

There are certain best practices to follow when preparing data sets for deep learning applications. Following is a checklist for data engineers when working with unstructured data:

(1) Check data formats

Samples in a data set, especially if collected via web scraping or crowdsourcing, may come in multiple data formats. For example, an image could be a JPEG, PNG, or TIFF, while an audio file could be a WAV, MP3, or FLAC. Check whether the data set samples are in different formats, so that you can standardize the format across all samples.

(2) Verify data types

Certain deep learning applications are based on multimodal data including text, images, audio, video, and structured metadata. For example, a model that predicts what video a user might watch next is trained using multiple data types. Verify the type of each data sample, then index and store the samples of each type separately. Note that values of a single kind, such as numbers, might also be stored as different types like int, float, or string.

(3) Verify data dimensions

It’s crucial to check the dimensionality of the samples in a data set. For example, a set of images containing faces may be gathered from different cameras, each associated with different default image dimensions.

(4) Identify what data needs to be labeled

Once you’ve completed the above steps, you can begin data labeling. 
It may not be feasible in some situations to label each data sample, because manual labeling can be prohibitively expensive and time-consuming. In this case, choose an appropriate number of data samples for labeling. For common machine learning classification use cases, you need to sample data for labeling from each category.

(5) Determine what type of labeling to perform

The same data sample can be labeled in multiple ways depending on the use case. For instance, an image containing people and cars may be labeled for faces, for segmenting people or cars, or for the vehicle registration plates.

(6) Decide who will label the data

Data labeling can be performed manually by domain experts, crowdsourced from non-experts, or done programmatically using rule-based or model-based algorithms. Determine which annotators will label what kind of data, depending on their expertise or level of training. If a data set will be labeled using software, then the required configuration parameters, protocols, and performance metrics need to be established so that labeling is consistent.

(7) Review data for errors and mistakes

Usually, the first round of data labeling contains errors. To improve the data quality and eradicate errors, more experienced annotators should conduct a second or third level of review. Depending on cost, time, and available resources, each data sample can also be independently labeled by multiple annotators; the most commonly provided label can be assigned as the final label.

(8) Split the data set into training and testing segments

Once a data set is labeled, split it into separate train and test subsets for training and evaluating the model, respectively. Depending on the use case and the amount of available data, the ratio might be 80:20, 90:10, or even 99:1. To obtain more reliable results, k-fold cross-validation is recommended. Multiple training and test sets are sampled randomly, and the final results are averaged across all the different folds. 
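
Steps (7) and (8) of the checklist can be sketched in a few lines of Python. This is only an illustration using the standard library: the function names `majority_label` and `k_fold_indices`, the sample file names, and the votes are all hypothetical, and the fold construction is one simple way to build k folds rather than a prescribed method.

```python
import random
from collections import Counter


def majority_label(votes):
    """Return the most common label among annotator votes (step 7).

    Ties go to the label that appears first in `votes`.
    """
    return Counter(votes).most_common(1)[0][0]


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, test_indices) pairs for k shuffled folds (step 8)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Fold i takes every k-th shuffled index starting at position i,
    # so fold sizes differ by at most one sample.
    folds = [indices[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


# Illustrative votes from three hypothetical annotators per image.
votes_per_image = {
    "img_001.jpg": ["face", "face", "no_face"],
    "img_002.jpg": ["no_face", "no_face", "no_face"],
}
final_labels = {name: majority_label(v) for name, v in votes_per_image.items()}

# Five folds over ten samples: each sample lands in exactly one test fold.
for train, test in k_fold_indices(n_samples=10, k=5):
    assert set(train).isdisjoint(test)
```

In practice, the model would be trained and evaluated once per fold and the resulting scores averaged, as the checklist describes.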
Conclusion

Without the protection of systematic data preparation and labeling checks, you may find that poor quality data damages the accuracy and performance of any analysis or models based on that data. If you follow the above guide, you will be able to ensure your data is good quality and labeled accurately.


Published by Andela

Introduction

Data culture refers to an organizational culture of using data to derive insights and make informed business decisions. Companies can build a strong data culture by arming themselves with data and the right set of people, policies, and technologies. A data culture helps companies become more competitive and resourceful by leveraging data. And data-driven companies make better, faster, and more objective business decisions. They promote greater employee engagement and retention, and drive better financial outcomes in terms of revenue, profitability, and operational efficiency. In this article, you'll learn about data culture, what its importance is for modern organizations, and how you can build a strong data culture at your company. Why You Need a Strong Data Culture? Without a solid data culture, organizations will inevitably fail to harness the power of data. As previously stated, data culture refers to a set of beliefs and practices that companies use to cultivate and drive more data-driven decisions. Traditionally, businesses relied on the instinct and gut of a select few leaders to make strategic business decisions. However, with the accumulation and collection of massive volumes of customer and business data, domain expertise and instinct can now be complemented with data-driven insights to make more informed decisions. There are several advantages to building a strong data culture. Some of these include the following:

Every business sector, from product to finance to HR, creates and collects a lot of data from external customers or internal operations. For business heads and decision-makers, it's no longer feasible to stay on top of the ever-increasing volumes of data to better understand and evaluate the current state of their organization. However, with data analysts and scientists embedded across each department, it is possible to tap business insights in real time and respond quickly to changes in business performance. A strong data culture also promotes greater employee engagement and retention. When employees see that decisions are made on the basis of data and not driven just by the highest-paid executives, they feel that they can contribute more insights to influence decision-making. In the long term, this facilitates attracting the best talent in the market who can be incentivized to have a greater say in making key business decisions using data. Moreover, there are also strong financial outcomes associated with building and promoting a data culture. Companies with data-driven cultures benefit from increased revenue, better customer services, and more operational efficiencies leading to improved profitability. How to Build a Strong Data Culture? Building a strong data culture is a long-term endeavor that requires patient support and encouragement from leadership. Companies with strong data-driven cultures have executives who lead by example and establish clear expectations that decisions will be objective and based on data. Data leaders can lead from the front by establishing clear goals and guidelines, investing in technology and training, as well as identifying and rewarding employee behaviors that embody a data-led culture. Beyond leadership setting a tone for the whole organization, let's take a look at a few other components that can help build a strong data culture. 
1 Bring Business and Data Science Together One of the first steps in building a data culture is to build a strong data science team consisting of data analysts, data engineers, and data scientists. Having quality in-house data talent is a competitive advantage that reaps multiple benefits, including building a robust culture focused on data. Once a data science team is up and running, it needs to be strategically embedded across various departments of the business. This helps business professionals interact with data professionals more regularly and better understand how the power of data analytics and data science can improve business efficiencies and impact profitability and growth. At the same time, this setting enables data professionals to better understand how the business works and build intuition for developing better data and machine learning–powered tools and products. This creates a positive flywheel where both business and data science teams learn to collaborate better and benefit from their respective skill sets. By bringing business and data science together, everyone in the organization learns to appreciate the value of data and use data-driven insights to improve the quality of their decisions, products, and services. 2 Leverage Data When Creating Goals and Deadlines Driving strategic business goals and metrics by leveraging data is a key aspect of encouraging a data-led culture. When goal-setting exercises are conducted objectively and leaders regularly use data and metrics from previous business quarters or external data about competitors or the overall market, everyone in the organization will start to embrace similar data-driven approaches. Leveraging data for setting new targets also enables every stakeholder in the organization to understand and anticipate their future goals and prioritize their work accordingly. 
Data-led goal setting is a more democratic and fair-minded process that encourages ownership of respective goals by every employee, as opposed to arbitrary, instinct-led, unilateral decisions made by the leadership. 3 Ensure Everybody Has Access to Data A fundamental step toward attaining a data culture is to democratize access to data across the organization. Data culture is a difficult goal when employees in different parts of a business struggle to obtain data. If you don't give your employees access to your data, they won't be able to utilize it when making decisions. This disenfranchises the data analysts, engineers, and scientists disproportionately, as their day-to-day work is impacted the most. Without a motivated team of data professionals, the downstream benefits of data are unlikely to materialize across various business departments. A strong foundation of data governance and data democratization is a prerequisite to achieving the business goals associated with a robust data culture. 4 Keep Your Data Technology Up-to-Date A critical aspect of building a data culture is employing modern tools and technologies to make it easier for employees to access, analyze, and share data-driven insights. Building a modern data stack with newer components like a metrics layer simplifies data-based operations and analytics for everyone, especially nontechnical business stakeholders. Technology, like data warehouses and metrics layers; data analytics tools, like Tableau or Power BI; and customer relationship management (CRM) tools, like Salesforce, are indispensable for modern businesses. Building the data architecture in a cloud environment like Amazon Web Services further improves access to data and reduces the need for multiple tools with a steep learning curve. The right use of tools for data, collaboration, and customer service goes a long way in fostering the use of technology to drive a strong data-led culture. 
5 Provide Training for Employees Having supportive leadership and access to data and technology is of little use if employees are not data literate and able to extract insights from data. This requires further investment in terms of learning and development to empower employees with the necessary skills to explore, understand, and share data-driven insights across the organization. In addition to reducing the skills gap, it also encourages people from nontechnical backgrounds to become more data savvy, collaborate better with data experts, and build more comprehensive data products and solutions to benefit the business. 6 Reward Data-Oriented Decisions and Behavior The primary challenge to becoming a data-driven organization is not technical but cultural. A strong data culture is based on a robust foundation of people, policies, and technology. However, once the initial foundation is in place, data leaders need to maintain and bolster the spirit of data-driven decision-making by incentivizing and rewarding behaviors that embody the culture. At the same time, decisions and behaviors that do not represent a holistic data-led process ought to be called out and questioned until every single employee is on board with the philosophy of using data for every decision. This includes encouraging experimentation to answer key business questions for which data does not exist yet or when the current set of data does not yield compelling evidence. Conclusion In this article, you learned about the importance of a data culture for businesses. It's a formidable task to build a strong data culture and is a top priority for a majority of CEOs. Data-driven companies are in a better position to attract and retain talent, make faster decisions with more conviction, and drive stronger growth and profitability to meet their business goals. 
According to research by McKinsey & Company, data-driven companies are able to achieve their goals faster and realize at least 20 percent more earnings.

Web3 is the third generation of the internet, based on emerging technologies like blockchains, tokens, DAOs, digital assets, and decentralised finance, and it has the potential to give control of digital assets back to users with greater trust and transparency.

Typical web3 applications focus on DAOs, DeFi, Stablecoins, Privacy and digital infrastructure, the creator economy amongst others. The web3 ecosystem represents a promising green space for creators, developers, and various types of tech and non-tech professionals as well. In my talk (video and slides shared above) for Crater's Encrypt 2022 hackathon, I describe how AI can be leveraged to build commercially viable web3 applications for India. I cover a number of relevant AI/ML datasets, models, resources and applications for these domains, recognized by the Ministry of Electronics and Information Technology's National Strategy on Blockchain:

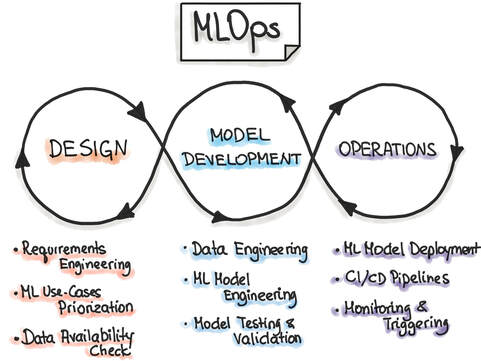

Machine learning operations (MLOps) refers to the emerging field of delivering machine learning models through repeatable and efficient workflows. The machine learning lifecycle is composed of various elements, as shown in the figure below. Similar to the practice of DevOps for managing the software development lifecycle, MLOps enables organizations to smooth the path to successful AI transformation by providing an engineering and technological backbone to underlying machine learning processes.

MLOps is a relatively new field, as the commercial use of AI at scale is itself a fairly new practice. MLOps is modeled on the existing field of DevOps, but in addition to code, it incorporates additional components, such as data, algorithms, and models. It includes various capabilities that allow the modern machine learning team, comprising data scientists, machine learning engineers, and software engineers, to organize the building blocks of machine learning systems and take models to production in an efficient, reliable, and reproducible fashion.

MLOps tools

MLOps is carried out using a diverse set of tools, each catering to a distinct component of the machine learning pipeline. Each tool under the MLOps umbrella is focused on automation and enabling repeatable workflows at scale. As the field of machine learning has evolved over the last decade, organizations are increasingly looking for tools and technologies that can help extract the maximum return from their investment in AI. In addition to cloud providers, like AWS, Azure, and GCP, there are a plethora of start-ups that focus on accommodating varied MLOps use cases. In this article, I will cover tools for the following MLOps categories:

- Metadata management
- Versioning
- Experiment tracking
- Model deployment
- Monitoring

In the following section, I will list a selection of MLOps tools from the above categories. It is important to note that although a particular tool might be listed under a specific category, the majority of these tools have evolved from their initial use case into a platform for providing multiple MLOps solutions across the entire ML lifecycle.

Metadata Management

Building machine learning models involves many parameters associated with code, data, metrics, model hyperparameters, A/B testing, and model artifacts, among others. Reproducing the entire ML workflow requires careful storage and management of the above metadata.

Featureform

Featureform is a virtual feature store. It can integrate with various data platforms, and it enables the management and governance of the data from which features are built. With a unique, feature-first approach, Featureform has built a product called Embeddinghub, which is a vector database for machine learning embeddings. Embeddings are high-dimensional representations of different kinds of data and their interrelationships, such as user or text embeddings, that quantify the semantic similarity between items.

MLflow

MLflow is an open-source platform for the machine learning lifecycle that covers experimentation and deployment, and it also includes a central model registry. It has four principal components: Tracking, Projects, Models, and Model Registry. In terms of metadata management, the MLflow Tracking API is used for logging parameters, code, metrics, and model artifacts.

Versioning

For machine learning systems, versioning is a critical feature. As the pipeline consists of various data sets, labels, experiments, models, and hyperparameters, it is necessary to version control each of these parameters for greater accessibility, reproducibility, and collaboration across teams.

Pachyderm

Pachyderm provides a data layer for the machine learning lifecycle. 
It offers a suite of services for data versioning that are organized by data repository, commit, branch, file, and provenance. Data provenance captures the unique relationships between the various artifacts, like commits, branches, and repositories.

DVC

DVC, or Data Version Control, is an open-source version control system for machine learning projects. It includes version control for machine learning data sets, models, and any intermediate files. It also provides code and data provenance to allow for end-to-end tracking of the evolution of each machine learning model, which promotes better reproducibility and usage during the experimentation phase.

Experiment Tracking

A typical machine learning system may only be deployed after hundreds of experiments. To optimize the model performance, data scientists perform numerous experiments to identify the most appropriate set of data and model parameters for the success criteria. Managing these experiments is paramount for staying on top of the data science modeling efforts of individual practitioners, as well as the entire data science team.

Comet

Comet is a machine learning platform for managing and optimizing the entire machine learning lifecycle, from experiment tracking to model monitoring. Comet streamlines the experimentation workflow for data scientists and enables clear tracking and visualization of the results of each experiment. It also allows side-by-side comparisons of experiments so users can easily see how model performance is affected.

Weights & Biases

Weights & Biases is another popular machine learning platform that provides a host of services, including [experiment tracking](https://wandb.ai/site/experiment-tracking). It facilitates tracking and visualization of every experiment, allows rerunning previous model checkpoints, and can monitor CPU and GPU usage in real time. 
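
To make the idea of experiment tracking concrete, here is a toy, in-memory sketch of what such tools record for each run (its parameters and resulting metrics) and how runs can be compared side by side. It is not the API of Comet, Weights & Biases, or any other product: the `Run` and `ExperimentLog` classes, the run names, and the accuracy values are all hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One experiment: the parameters it used and the metrics it produced."""
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)

    def log_metric(self, key, value):
        self.metrics[key] = value


class ExperimentLog:
    """Hypothetical in-memory tracker; real platforms persist and visualize runs."""

    def __init__(self):
        self.runs = []

    def start_run(self, name, **params):
        run = Run(name=name, params=params)
        self.runs.append(run)
        return run

    def compare(self, metric):
        """Return (name, params, value) rows sorted by metric, highest first."""
        scored = [r for r in self.runs if metric in r.metrics]
        scored.sort(key=lambda r: r.metrics[metric], reverse=True)
        return [(r.name, r.params, r.metrics[metric]) for r in scored]

    def best(self, metric):
        """Return the run with the highest value for the given metric."""
        scored = [r for r in self.runs if metric in r.metrics]
        return max(scored, key=lambda r: r.metrics[metric])


log = ExperimentLog()

run = log.start_run("baseline", learning_rate=0.1, epochs=5)
run.log_metric("accuracy", 0.81)  # placeholder value, not a real result

run = log.start_run("lower-lr", learning_rate=0.01, epochs=5)
run.log_metric("accuracy", 0.86)  # placeholder value, not a real result

for name, params, accuracy in log.compare("accuracy"):
    print(name, params, accuracy)
```

Keeping parameters and metrics together per run is what makes the side-by-side comparison possible; dedicated platforms add persistence, visualization, and team sharing on top of this basic record.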
Model Deployment

Once a machine learning model is built and tests have found it to be robust and accurate enough to go to production, the model is deployed. This is an extremely important aspect of the machine learning lifecycle, and if not managed well, it can lead to errors and poor performance in production. AI models are increasingly being deployed across a range of platforms, from on-premises servers to the cloud to edge devices. Balancing the trade-offs for each kind of deployment and scaling the service up or down during critical periods are very difficult tasks to achieve manually. A number of platforms provide model deployment capabilities that automate the entire process of taking a model to production.

Seldon

Seldon is model deployment software that helps enterprises manage, serve, and scale machine learning models in any language or framework on Kubernetes. It’s focused on expediting the process to take a model from proof of concept to production, and it’s compatible with a variety of cloud providers.

Kubeflow

Kubeflow is an open-source system for productionizing models on the Kubernetes platform. It simplifies machine learning workflows on Kubernetes and provides greater portability and scalability. It can run on any hardware and infrastructure on which Kubernetes is running, and it is a very popular choice for machine learning engineers when deploying models.

Monitoring

Once a model is in production, it is essential to monitor its performance and log any errors or issues that may have caused the model to break in production. Monitoring solutions enable setting thresholds as indicators for robust model performance and are critical in addressing known issues, like data drift. These tools can also monitor the model predictions for bias and explainability.

Fiddler

Fiddler is machine learning model performance monitoring software. To ensure expected model performance, it monitors data drift, data integrity, and anomalies in the data. 
Additionally, it provides model explainability solutions that help identify, troubleshoot, and understand underlying problems and causes of poor performance.

Evidently

Evidently is an open-source machine learning model monitoring solution. It measures model health, data drift, target drift, data integrity, and feature correlations to provide a holistic view of model performance.

Conclusion

MLOps is a growing field that focuses on organizing and accelerating the entire machine learning lifecycle through best practices, tools, and frameworks borrowed from the DevOps philosophy of software development lifecycle management. With machine learning, the need for tooling is much greater, as machine learning is built on foundational blocks of data and models, as well as code. To bring reliability, maturity, and scale to machine learning processes, a diverse set of MLOps tools is increasingly being used. These tools are developed for optimizing the nuts and bolts of machine learning operations, including metadata management, versioning, model building and experiment tracking, model deployment, and monitoring in production. Over the past decade, the field of AI and machine learning has grown rapidly, with organizations embracing AI and recognizing its critical importance for transforming their business. The field of MLOps is still young, but the creation and adoption of tools will further empower organizations in their journey of AI transformation and value creation.

Published by CloudForecast

Introduction

Amazon Redshift is a widely used cloud data warehouse that many businesses, like Nasdaq, GE, and Zynga, rely on to process analytical queries and analyze exabytes of data across databases, data lakes, data warehouses, and third-party data sets. There are multiple use cases for Redshift, including enhancing business intelligence capabilities, increasing developer and analyst productivity, and building machine learning models for predictive insights, like demand forecasting. Amazon Redshift can be leveraged by modern data-driven organizations to vastly improve their data warehousing and analytics capabilities. However, the pricing for Redshift services can be challenging to understand, with multiple criteria that define the total cost. In this article, you’ll learn about Amazon Redshift and its pricing structure, with suggestions for how to optimize costs.

What Is Amazon Redshift?

Essentially, Amazon Redshift provides analytics over multiple databases and offers high scalability in a secure and compliant fashion. Additionally, there is a serverless option called Amazon Redshift Serverless that makes it even easier to scale an analytics setup rapidly without requiring a managed data warehouse infrastructure. It helps with data democratization and helps various data stakeholders extract data insights by simply loading and querying data in the warehouse.

Amazon Redshift Pricing

In this section, you’ll learn about Amazon Redshift’s capabilities as they pertain to usage and pricing.

Free Tier

For new enterprise users, the AWS Free Tier provides a free two-month trial of the DC2.Large node. This free service includes 750 hours per month, which is sufficient to run a single DC2.Large node with 160GB of compressed solid-state drives (SSD).

On-Demand Pricing

When you launch an Amazon Redshift cluster, you select a number of nodes in a specific region as well as their instance type to run your data warehouse. 
In on-demand pricing, a simple hourly rate applies based on the previous configuration and is billed as long as the cluster is live. The typical hourly rate for a DC2.Large node is $0.25 USD per hour.

Redshift Serverless Pricing

With Amazon Redshift Serverless, costs accrue only when the data warehouse is active and are measured in Redshift Processing Units (RPUs). You’re charged in terms of RPU-hours on a per-second basis. The serverless configuration also includes concurrency scaling and Amazon Redshift Spectrum, and the cost for these services is already included.

Managed Storage Pricing

Amazon Redshift charges for the data stored in managed storage at a specific rate per GB-month. Usage is calculated on an hourly basis as a function of the total amount of data, and the rate starts as low as $0.024 USD per GB-month with the RA3 node. The cost of managed storage also varies according to the particular AWS region in which the data is stored. For example, consider the cost of managed storage where 100TB of data is stored with an RA3 node type for thirty days in the US East region, where the cost is $0.024 USD per GB-month. The total usage for thirty days in GB-hours is as follows:

100TB × 1024GB/TB (converting TB to GB) × 30 days × 24 hours/day = 73,728,000 GB-hours

Then you can convert GB-hours to GB-months:

73,728,000 GB-hours / (24 × 30) hours per month = 102,400 GB-months

Finally, you can calculate the total cost of 102,400 GB-months at $0.024 USD/GB-month in the US East region:

102,400 GB-months × $0.024 USD = $2,457.60 USD

Spectrum Pricing

With Amazon Redshift Spectrum, users can run SQL queries directly on the data in the S3 buckets. Here, the cost is based on the number of bytes scanned by the Spectrum utility. The pricing of Redshift Spectrum is $5 USD per terabyte of data scanned.

Concurrency Scaling Pricing

With Concurrency Scaling, Amazon Redshift can be scaled to multiple concurrent users and queries. 
For every twenty-four hours that your main cluster is live, you accrue a one-hour credit. Any additional usage is charged at a per-second, on-demand rate that depends on the number and types of nodes in the main cluster.

Reserved Instance Pricing

Reserved instances are designated for stable production workloads and are less expensive than clusters run on an on-demand basis. Significant cost savings can be achieved through long-term usage and commitment to Amazon Redshift in the span of a few years. Reserved instances can be paid for all up front, partially up front, or monthly over the course of a year with no up-front charges.

Amazon Redshift Cost Optimization Considerations

Before you begin using Amazon Redshift, you need to be aware of your current costs.

AWS Cost Explorer

The AWS Pricing Calculator provides a configurable tool to estimate the cost of using Amazon Redshift. For instance, the annual cost of one node of the DC2.8xlarge instance in the US East (Ohio) region on an on-demand basis is as follows:

1 instance × $4.80 USD hourly × 730 hours in a month × 12 months = $42,048 USD

The cost for the same Amazon Redshift configuration for a reserved instance for a one-year term paid up front is $27,640 USD.

AWS Tags

Using AWS cost allocation tags can help you decode and manage your AWS costs. Tags enable AWS resources to be labeled in the form of key-value pairs and can include various types, like technical, business, security, and automation. Once the tags are activated in the Billing and Cost Management console, a cost allocation report can be generated based on the specific resources tagged. Tags can be user-defined or AWS-generated.

Amazon Redshift Cost Optimization

Optimizing Amazon Redshift costs comes down to effective planning, prudent usage and allocation of resources, and regular monitoring of the usage and associated costs. 
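
As a planning aid, the cost figures quoted in the pricing sections can be reproduced with a few lines of arithmetic. The sketch below is only an illustration: the function names are hypothetical, the rates are the ones quoted in this article (actual AWS prices vary by region and change over time), and the 730-hour month is the same convention used in the on-demand estimate.

```python
HOURS_PER_MONTH = 730  # convention used in the on-demand estimate


def managed_storage_cost(terabytes, usd_per_gb_month, days=30):
    """Managed storage cost, following the GB-hours to GB-months conversion."""
    gigabytes = terabytes * 1024
    gb_hours = gigabytes * days * 24
    gb_months = gb_hours / (24 * 30)
    return gb_months * usd_per_gb_month


def on_demand_annual_cost(nodes, usd_per_hour):
    """Annual on-demand cost for a cluster that runs continuously."""
    return nodes * usd_per_hour * HOURS_PER_MONTH * 12


def reserved_savings(on_demand_usd, reserved_usd):
    """Return (absolute saving, saving as a fraction of the on-demand cost)."""
    saved = on_demand_usd - reserved_usd
    return saved, saved / on_demand_usd


# 100TB on RA3 for thirty days at $0.024 USD per GB-month.
storage = managed_storage_cost(terabytes=100, usd_per_gb_month=0.024)
print(f"Managed storage: ${storage:,.2f}")  # matches the $2,457.60 figure

# One DC2.8xlarge node at $4.80 USD per hour, compared with the quoted
# one-year, all-up-front reserved price of $27,640 USD.
on_demand = on_demand_annual_cost(nodes=1, usd_per_hour=4.80)
saved, fraction = reserved_savings(on_demand, reserved_usd=27_640)
print(f"On-demand: ${on_demand:,.2f}, reserved saves ${saved:,.2f} ({fraction:.1%})")
```

With the quoted prices, the reserved instance comes out roughly a third cheaper than running the same node on demand for a full year.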
Optimizing Queries The analytical queries made on the data stored in Amazon Redshift can be optimized to run more efficiently. Queries can be compute-intensive, can be storage-intensive, or can take a long time to execute. There are a number of query tuning techniques that can be used to optimize your queries. Tables with skewed data or missing statistics, and queries with nested loops and long wait times, typically affect query performance and can be improved as illustrated in this AWS developer guide. Here is a commonly used but inefficient query that selects all the columns in a table: SELECT * FROM USERS This query can be very inefficient and slow if the table consists of thousands of columns, especially if only a few columns are relevant for the necessary analysis. It can be optimized by naming and retrieving only the columns you need, like the following: SELECT Firstname, Lastname, DOB FROM USERS Cluster Limits and Quotas Usage limits on Amazon Redshift clusters can be programmed using the AWS Command Line Interface (CLI) tool. Limits can be imposed on concurrency scaling in terms of time and on Redshift Spectrum in terms of data scanned. Daily, weekly, or monthly periods can be used. A number of limits and quotas are defined for Redshift resources that can also be applied to constrain the overall costs associated with Redshift. Data Type Amazon Redshift costs can also be managed by storing data in a compressed, partitioned, and columnar data format, like Apache Parquet, since less data is scanned. Conclusion Amazon Redshift is a powerful and cost-effective cloud-native data warehouse that provides scalable and performant data analytics and processing capabilities. It also comes with a serverless configuration that allows any data stakeholder to run data queries without the need to provision and manage the data warehouse infrastructure.
Several components affect Amazon Redshift pricing: on-demand and reserved instance pricing, serverless pricing, managed storage pricing, Redshift Spectrum pricing, and concurrency scaling pricing. Keeping on top of the various Amazon Redshift costs is not straightforward but can be made easier by AWS cost monitoring tools, like CloudForecast. CloudForecast helps manage AWS costs through daily cost management reports, monthly financial reports, untagged AWS resources discovery, and idle and underutilized resources visibility for cost-saving opportunities.

Strong engineering talent is the bedrock of modern technology companies. Software engineers, in particular, are in high demand given their expertise and skills. At the same time, there is a much greater supply of software companies and startups, all of which are jostling to hire top engineers. Given this market reality, retention of top engineering talent is imperative for a company to grow and innovate in the short as well as the long term.

Retaining employees is critical for numerous reasons. It helps a company retain experience not only in terms of employees’ domain expertise and skills, but also organizational knowledge of products, processes, people, and culture. Strong employee retention rates (>90%) ensure a long-term foundation for success and enhance team morale as well as trust in the company. A stable engineering team is in a better position to both build and ship innovative products and establish a reputation in the market that helps attract top-quality talent. The corporate incentive for maintaining high standards of employee hiring and retention is also related to the costs of employee churn. Turnover costs US companies $1 trillion USD a year, with an annual turnover rate of more than twenty-six percent. The cost of replacing an employee is often as high as two times their annual salary. This is a tremendous expense that can be averted through better company policies and culture. The onus is typically on the human resources (HR) team to develop more employee-friendly practices and promote higher engagement and work–life balance. However, in practice, most HR teams defer to the company leadership, and that is where the buck stops. Leaders and managers have a fundamental responsibility to retain the employees on their team because, more often than not, employees do not leave the company per se but their line manager. I will discuss best practices and strategies to improve retention, which ought to be a consistent effort across the entire employee lifecycle, from recruiting to onboarding through regular milestones during an employee’s tenure. Start at the Start More often than not, managers fail to invest in onboarding preparation and processes out of laziness or indifference. Good employee retention practice starts at the very beginning, i.e., at the time of hiring.
Hiring talent through a structured, transparent, fair, and meritocratic interviewing process that allows the candidate to understand their particular role and responsibilities, the company’s diversity and inclusion practices, and the larger mission of the company sets an important tone for future employees. Hiring the right people who are a good culture fit increases the likelihood of greater engagement and longer tenure at the company. Hiring managers should not hire for the sake of hiring. They should put considerable thought into each new hire and how that hire might fit in on their team. Apart from hiring, managers have other important considerations, including:

In the first few months, the new hires, the hiring team, and company are in a “dating” phase, evaluating each other and gathering evidence on whether to commit to a longer-term relationship. Most new employees make up their mind to stay or leave within the first six months. A third of new hires who quit said they had barely any onboarding or none at all. The importance of a new employee’s first impressions on the joining date, the first week, the first month, and the first quarter cannot be overemphasized. Great onboarding starts before the new hire’s join date, ensuring all necessary preparation is handled, like paperwork. Orientation programs on the join day are essential to introduce the company and expand on its mission, values, and culture beyond what the employee might have learned during the interviews. Minor things like having the team know in advance about a new team member’s join date, and readying the desk, equipment, access, and logins are tell-tale signs of how much thought and effort the hiring team has invested in onboarding. Fellow teammates also make a significant impact, whether they are welcoming and drop in to say “hi” or stop by for a quick chat to get to know the hire better, or take the new employee out for lunch with the whole team. Onboarding should not end on day one but continue in various forms. Some examples include:

A successful onboarding strategy should enable the employee to know their first project, the expectations, associated milestones, and how performance evaluation works. Keep It Up! Onboarding should be followed up with regular check-ins by the manager and HR at the one-month, three-month, and six-month mark. These meetings should be treated as an opportunity for the company to assess the new employee’s comfort level on the team and provide feedback as needed. An onboarding mentor or buddy, if not assigned already, should be provided to help the employee find their feet and learn the informal culture and practices. The manager should set up the employee for success by providing low-hanging projects that are quick to deliver and help the new hire understand the process of building and deploying a new feature using the company’s internal engineering tools and systems. With quick wins, new hires are able to build trust within the organization and gain more confidence to do excellent work. As time goes on, the role of the hiring manager becomes more prominent in coordinating regular 1-on-1 meetings, providing the new hire clear work guidelines, as well as challenging and stimulating projects. Apart from work, an introduction to the organizational setup and culture, as well as social interaction within and beyond the team is also crucial. As the new employee ramps up, it is important to give constructive feedback so that the employee can improve. Where a new employee delivers positive impact in the early days itself, the manager should highlight their work within the team and organization, and motivate the employee to continue to perform well. In addition to core engineering work, employees feel more connected when a company actively invests in their learning and development. Cross-functional training programs that involve employees across different teams foster deeper collaboration and a stronger sense of connection within the various parts of the company. 
Investment in employees’ upskilling and education via partnership with external learning platforms or vendors also generates a positive culture of curiosity and learning. Learning new skills energizes employees and provides them opportunities to grow and develop. They can then apply the newly learned knowledge and skills to pertinent business problems. It creates a virtuous culture that yields overall positive outcomes for the employee and employer alike, and positively influences long-term retention rates. New employees generally feel the need to be positively engaged. A powerful mission statement can sometimes convert naysayers faster and generate a company-wide sense of being part of something impactful. This fosters deeper engagement, loyalty, and trust in the company and helps employees embrace company values, resulting in better employee retention rates. Frequent town hall meetings with the leadership enable a new hire to understand the organization as a coherent whole and their particular role in furthering the company’s mission. Listen to Feedback The diverse organizational efforts to onboard, engage, and enhance new employees’ perception of the company are bound to fail if the organization does not seek and act on the feedback shared by the new hires. Companies ought to create an internal culture of open communication whereby they seek feedback from employees via surveys, meetings, and town halls, and showcase transparent efforts in implementing employees’ suggestions and feedback. Regular 1-on-1 meetings with managers should be treated as an opportunity to gather feedback and offer the employee insights into whether and how the company is taking action on that feedback. However, in spite of organizational efforts to improve employee satisfaction and wellbeing, some attrition is inevitable. Attrition rates of more than ten percent are a cause for concern, especially when top-performing employees leave the company.
Exit interviews are typically conducted by HR and hiring managers, but in practice these are largely farcical as the employees hardly share their honest opinions and have lost trust that the company can take care of their career interests and development. Companies can implement processes that bring greater transparency around employee decisions related to hiring, promotion, and exit. These processes will also hold HR and managers to greater accountability with respect to employee churn, and incentivize them to increase the retention rates in their teams. In past generations, job stability was a paramount aspiration for employees which meant they typically spent all their working lives at the same company. In today’s world, with a plethora of enterprises and new startups, high-performing talent is in greater demand and it is possible to accelerate one’s career growth by frequently job hopping and switching companies. Nowadays, feedback about company processes, culture, compensation, interviews, and so on, is available on a plethora of public platforms including Glassdoor and LinkedIn. Companies are now more proactive in managing their online reputation and act on feedback from the anonymous reviews on such platforms. Conclusion Employees in the post-Covid remote-working world are prone to greater degrees of stress, mental health issues, and burnout, all of which have adverse impacts on their work–life balance. In such extraordinary times, companies face the unique challenge—and opportunity—to develop and promote better employee welfare practices. At one end of the spectrum, there are companies like Amazon. In 2015, The New York Times famously portrayed the company as a “bruising workplace.” Then, in 2021, The New York Times again reported on Amazon for poor workplace practices and systems, prompting a public acknowledgment from the CEO that Amazon needs to do a better job. 
At the other end of the spectrum, there are companies like Atlassian or Spotify that have made proactive changes in their organizational culture and are being lauded for new practices to promote employee welfare during the pandemic. Companies that adapt to the changing times and demonstrate that they genuinely care for their employees will enjoy better retention rates, lower rehiring costs, and long-term employee trust that marks the company as a beacon of progressive workplace culture and employment practices.

Data science teams are an integral part of early-stage and growth-stage start-ups as well as midlevel and enterprise companies. A data science team can include a wide range of roles that take care of the end-to-end machine learning lifecycle from project conceptualization to execution, delivery, and monitoring:

The manager of a data science team in an enterprise organization has multiple responsibilities, including the following:

As the data science manager, it’s critical to have a structured, efficient hiring process, especially in a highly competitive job market where the demand outstrips the supply of data science and machine learning talent. A transparent, thoughtful, and open hiring process sends a strong signal to prospective candidates about the intent and culture of both the data science team and the company, and can make your company a stronger choice when the candidates are selecting an offer. In this blog, you’ll learn about key aspects of the process of hiring a top-class data science team. You’ll dive into the process of recruitment, interviewing, and evaluating candidates to learn how to find the ones who can help your business improve its data science capabilities. Benefits of an Efficient Hiring Process Recent events have accelerated organizations’ focus on digital and AI transformation, resulting in a very tight labor market when you’re looking for digital skills like data science, machine learning, statistics, and programming. A structured, efficient hiring process enables teams to move faster, make better decisions, and ensure a good experience for the candidates. Even if candidates don’t get an offer, a positive experience interacting with the data science and the recruitment teams makes them more likely to share good feedback on platforms like Glassdoor, which might encourage others to interview at the company. Hiring Data Science Teams A good hiring process is a multistep process, and in this section, you’ll look at every step of the process in detail. Building a Funnel for Talent Depending on the size of the data science team, the hiring manager may have to assume the responsibility of reaching out to candidates and building a pipeline of talent. In larger organizations, managers can work with in-house recruiters or even third-party recruitment agencies to source talent.
It’s important for data science managers to clearly convey the requirements for the recruited candidates, such as the number of candidates desired and the profiles of those candidates. Candidate profiles might include things like previous experience, education or certifications, skill set or tech stack, and experience with specific use cases. Using these details, recruiters can then start their marketing, advertising, and outreach campaigns on platforms like LinkedIn, Glassdoor, Twitter, HackerRank, and LeetCode. In several cases, recruiters may identify candidates who are a strong fit but who may not be on the job market or are not actively looking for new roles. A database of all such candidates ought to be maintained so that recruiters can proactively reach out to them at a more suitable time and reengage the candidates. Another trusted source of good candidates is employee referrals. An in-house employee referral program that incentivizes current employees to refer candidates from their network is often an effective way to attract the specific types of talent you’re looking for. The data science leader should also publicize their team’s work through channels like conferences or workshops, company blogs, podcasts, media, and social media. By investing dedicated time and energy in building up the profile of the data science team, it’s more likely that candidates will reach out to your company seeking data science opportunities. When looking for a diverse set of talent, the search can be difficult as data science is a male-dominated field. As a result, traditional recruiting paths will continue to reflect this bias. Reaching out and building relationships with groups such as Women in Data Science can help broaden the pipeline of talent you attract.
Defining Roles and Responsibilities Good candidates are more likely to apply for roles that have a clear job description, including a list of potential data science use cases, a list of required skills and tech stack, and a summary of the day-to-day work, as well as insights into the interviewing process and time lines. Crafting specific, accurate job descriptions is a critical, if often overlooked, aspect of attracting candidates. The more information and clarity you provide up front, the more likely it is that candidates can decide whether it’s a suitable role for them and whether they should go ahead with the application. If you’re struggling to write one, you can start with an existing job description template and then customize it in accordance with the needs of the team and company. It's also critical not to overpopulate a job description with every possible skill or experience you hope a candidate brings. That will narrow your potential applicant pool. Instead, focus on those skills and experiences that are absolutely critical. The right candidate will be able to pick up other skills on the job. It can be useful for the job description to include links to any recent publications, blogs, or interviews by members of the data science team. These links provide additional details about the type of work your team does and also offer candidates a glimpse of other team members. Here are some job description templates for the different roles in a data science team: Interviewing Process When compared to software engineering interviews, the interview process for data science roles is still very unstructured, and data science candidates are often uncertain about what the interview process involves.
The professional position of data scientist has only existed for a little over a decade, and in that time, the role has evolved and transformed, resulting in even newer, more specialized roles, such as data engineer, machine learning engineer, applied scientist, research scientist, and product data scientist. Because of the diversity of roles that could be considered data science, it’s important for a data science manager to customize the interviewing process depending on the specific profile they’re seeking. Data scientists need to have expertise in multiple domains, and one or more second-round interviews can be tailored around these core skills:

Given how tight the job market is for data science talent, it’s important not to overcomplicate the process. The more steps in the process, the longer it will take and the higher the likelihood you will lose viable candidates to other offers. So be thoughtful in your approach and evaluate it periodically to align with the market. Types of Data Science Interviews Interviewing is often a multistep process that can involve several kinds of assessment. Screening Interviews To save time, one or more screening rounds can be conducted before inviting candidates for second-round interviews. These screening interviews can take place virtually and involve an assessment of essential skills, like programming and machine learning, along with a deep dive into the candidate’s experience, projects, career trajectory, and motivation to join the company. These screening rounds can be conducted by the data science team itself or outsourced to other companies, like HackerRank, HackerEarth, Triplebyte, or Karat. Onsite Interviews Once the screening interviews are complete, the top candidates will be invited to second-round interviews, either virtually or in person. The data science manager has to take the lead in coordinating with internal interviewers to confirm the schedule for the series of interviews that will assess the candidate’s skills, as described earlier. On the day of the second-round interviews, the hiring manager needs to help the candidate feel welcome and explain how the day will proceed. Some companies like to invite candidates to lunch with other team members, which breaks the ice by allowing the candidate to interact with potential team members in a social setting. Each interview in the series should start by having the interviewer introduce themself and provide a brief summary of the kind of work they do.
Depending on the types of interviews and assessments the candidate has already been through, the rest of the interview could focus on the core skill set to be evaluated or other critical considerations. Wherever possible, interviewers should offer the candidate hints if they get stuck and otherwise try to make them feel comfortable with the process. The last five to ten minutes of each interview should be reserved for the candidate to ask the interviewer questions. This is a critical component of second-round interviews, as the types of questions a candidate asks offer a great deal of information about how carefully they’ve considered the role. Before the candidate leaves, it’s important for the recruiter and hiring manager to touch base with the candidate again, inquire about their interview experience, and share time lines for the final decision. Technical Assessment It is common to include some sort of case study or technical assessment to get a better understanding of a candidate’s approach to problem solving, handling of ambiguity, and practical skills. This provides the company with good information about how the candidate may perform in the role. It is also an opportunity to show the candidate what type of data and problems they may work on when working for you. Evaluating Candidates After the second-round interviews and technical assessment, the hiring manager needs to coordinate a debrief session. In this meeting, every interviewer shares their views based on their experience with the candidate and offers a recommendation on whether the candidate should be hired. After obtaining the feedback from each member of the interview panel, the hiring manager also shares their opinion. If the candidate unanimously receives a strong hire or a strong no-hire signal, then the hiring manager’s decision is simple. However, there may be candidates who perform well in some interviews but not so well in others, and who elicit mixed feedback from the interview panel.
In cases like this, the hiring manager has to make a judgment call on whether that particular candidate should be hired or not. In some cases, an offer may be extended if a candidate didn’t do well in one or more interviews but the panel is confident that the candidate can learn and upskill on the job, and is a good fit for the team and the company. If multiple candidates have interviewed for the same role, then a relative assessment of the different candidates should be considered, and the strongest candidate or candidates, depending on the number of roles to be filled, should be considered. While most of the interviews focus on technical data science skills, it’s also important for interviewers to use their time with the candidate to assess soft skills, like communication, clarity of thought, problem-solving ability, business sense, and leadership values. Many large companies place a very strong emphasis on behavioral interviews, and poor performance in this interview can lead to a rejection, even if the candidate did well on the technical assessments. Job Offer After the debrief session, the data science manager needs to make their final decision and share the outcome, along with a compensation budget, with the recruiter. If there’s no recruiter involved, the manager can move directly to making the candidate an offer. It’s important to move quickly when it comes to making and conveying the decision, especially if candidates are interviewing at multiple companies. Being fast and flexible in the hiring process gives companies an edge that candidates appreciate and take into consideration in their decision-making process. Once the offer and details of compensation have been sent to the candidate, it’s essential to close the offer quickly to prevent candidates from using your offer as leverage at other companies. Including a deadline for the offer can sometimes work to the company’s advantage by incentivizing candidates to make their decision faster. 
If negotiations stretch and the candidate seems to lose interest in the process, the hiring manager should assess whether the candidate is really motivated to be part of the team. Sometimes, it may move things along if the hiring manager steps in and has another brief call with the candidate to help remove any doubts about the type of work and projects. However, additional pressure on the candidates can often work to your disadvantage and may put off a skilled and motivated candidate in whom the company has already invested a lot of time and money. Conclusion In this article, you’ve looked at an overview of the process of hiring a data science team, including the roles and skills you might be hiring for, the interview process, and how to evaluate and make decisions about candidates. In a highly competitive data science job market, having a robust pipeline of talent and a fast, fair, and structured hiring process can give companies a competitive edge.

Published by Domino Data Lab

Reproducibility is a cornerstone of the scientific method and ensures that tests and experiments can be reproduced by different teams using the same method. In the context of data science, reproducibility means that everything needed to recreate the model and its results, such as data, tools, libraries, frameworks, programming languages, and operating systems, has been captured, so that identical results can be produced with little effort regardless of how much time has passed since the original project.

Reproducibility is critical for many aspects of data science including regulatory compliance, auditing, and validation. It also helps data science teams be more productive, collaborate better with nontechnical stakeholders, and promote transparency and trust in machine learning products and services. In this article, you’ll learn about the benefits of reproducible data science and how to ingrain reproducibility in every data science project. You’ll also learn how to cultivate an organizational culture that promotes greater reproducibility, accountability, and scalability. What does it mean to be reproducible? Machine learning systems are complex, incorporating code, data sets, models, hyperparameters, pipelines, third-party packages, and model training and development configurations across machines, operating systems, and environments. To put it simply, reproducing a data science experiment is difficult, if not impossible, if you can’t recreate the exact conditions used to build the model. To do that, all artifacts have to be captured and versioned in an accessible repository. That way, when a model needs to be reproduced, the exact environment, with the exact training data, code, and package combination, can be recreated easily. When the artifacts are not captured at the time of creation, reproducing a model too often becomes an archeological expedition that can take weeks or months, or may never succeed at all. While the focus on reproducibility is a recent phenomenon in data science, it has long been a cornerstone of scientific research across all kinds of industries, including clinical and life sciences, healthcare, and finance. If your company is unable to produce consistent experimental results, that can significantly impact your productivity, waste valuable resources, and impair decision-making. Situations Where Reproducibility Matters In data science, reproducibility is especially vital for data scientists to apply the experimental findings to their own work.
Regulatory Compliance In highly regulated industries like insurance, finance and life sciences, all aspects of a model have to be documented and captured to provide full transparency, justification and validation on how models are developed and used inside an organization. This includes the type of algorithm being used, why the algorithm has been selected and how the model has been implemented within the business. A big part of complying involves being able to exactly reproduce the results of a model at any time. Without a system for capturing the artifacts, code, data, environment, packages and tools used to build a model this can be a time consuming, difficult task. Model Validation In all industries models should be validated prior to deployment to ensure the results are repeatable, understood and the model will achieve its intended purpose. Too often this is a time intensive process with validation teams having to piece together the environment, tools, data and other artifacts that were used to create the model, which slows down moving a model into production. When an organization is able to reproduce a model instantly, validators can focus on their core function of ensuring the model is robust and accurate. Collaboration Data science innovation happens when teams are able to collaborate and compound knowledge. It doesn’t happen when they have to spend time painstakingly recreating a prior experiment or accidentally duplicate work. When all work is easily reproducible, and easily searched, it's easy to build on prior work to innovate. It also means that as team staffing changes, institutional knowledge doesn’t disappear. Ingraining Reproducibility in Data Science Projects Instilling a culture of reproducibility in data science across an organization requires a long-term strategy, technology investment, and buy-in from data and engineering leadership. 
In this section, you’ll learn about a few established best practices for conducting and promoting reproducible data science work in your industry. Version Control Version control refers to the process of tracking and managing changes to artifacts, like code, data, labels, models, hyperparameters, experiments, dependencies, documentation, as well as environments for training and inference. The building blocks of version control for data science are more complex than software projects, making reproducibility that much more difficult and challenging. For code, there are multiple platforms, like GitHub, GitLab, and Bitbucket, that can be used to store, update, and track code, like Python scripts, Jupyter Notebooks, and configuration files, in common repositories. However that isn’t sufficient. Datasets need to be captured and versioned as well. So do the environments, tools and packages. This is because code may or may not run the same on a different version of Python or R, for example. Data may have changed even if pulled with the same parameters. Similarly capturing different versions of models and corresponding hyperparameters for each experiment is important to reproduce and replicate the results of a winning model that might be deployed to production. Reproducing end-to-end data science experiments is a complex, technical challenge that can be achieved much more efficiently using platforms like Domino’s Enterprise MLOps platform which eliminates all manual work and ensures reproducibility at scale. Scalable Systems Building accurate and reproducible data science models requires robust and scalable infrastructure for data storage and warehousing, data pipelines, feature stores, model stores, deployment pipelines, and experiment tracking. For machine learning models that serve predictions in real time, the importance of reproducibility is even higher in order to quickly resolve bugs and performance issues. 
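As a rough illustration of the version control practice described above, where data, hyperparameters, and environment details are captured alongside the code, the sketch below writes a small manifest for one experiment. It uses only the Python standard library; the function names, the manifest fields, the file name, and the hyperparameter values are all hypothetical, and a dedicated platform would capture far more than this.

```python
import hashlib
import json
import platform
import tempfile
from importlib import metadata
from pathlib import Path


def file_sha256(path, chunk_size=1 << 20):
    """Hash a data file in chunks so large files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_path, hyperparameters, packages=()):
    """Record what is needed to tell whether a later run used the same inputs."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed in this environment
    return {
        "data_sha256": file_sha256(data_path),
        "hyperparameters": hyperparameters,
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "package_versions": versions,
    }


# Demo with a throwaway data file; the values are made up for illustration.
with tempfile.TemporaryDirectory() as tmp:
    data_file = Path(tmp) / "train.csv"
    data_file.write_bytes(b"id,label\n1,0\n2,1\n")
    manifest = build_manifest(
        data_file,
        hyperparameters={"learning_rate": 0.01, "epochs": 5},
        packages=["pip", "surely-not-installed-package"],
    )

print(json.dumps(manifest, indent=2, sort_keys=True))
```

Committing a manifest like this next to the code gives a later reader a quick way to check whether the data or the environment changed between two runs, even when the code did not.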
End-to-end machine learning pipelines involve multiple components, and an organizational strategy for reproducible data science work must carefully plan for the tooling and infrastructure that enable it. Engineering reproducible workflows requires sophisticated tooling to encompass code, data, models, dependencies, experiments, pipelines, and runtime environments. For many organizations, it makes sense to buy (vs. build) such scalable workflows focused on reproducible data science.

Conclusion

Reproducibility is a cornerstone of scientific research. It is especially significant for cross-functional disciplines like data science that involve multiple artifacts, like code, data, models, and hyperparameters, as well as a diverse set of practitioners and stakeholders. Reproducing complex experiments and results is, therefore, essential for teams and organizations when deciding which models to deploy, identifying root causes when models break down, and building trust in data science work. Reproducing data science results requires a complex set of processes and infrastructure that many teams and companies will find neither easy nor necessary to build in-house.

Published by Colabra

Introduction

Effective communication skills are pivotal to success in science. From maximizing productivity at work through efficient teamwork and collaboration to preventing the spread of misinformation during global pandemics like Covid-19, the importance of strong communication skills cannot be emphasized enough. However, scientists often struggle to communicate their work clearly, for various reasons. First, most academic institutions do not prioritize training scientists in essential soft skills like communication. With negligible organizational or departmental training and little to no feedback from professors and peers, scientists fail to fully appreciate the real-world consequences of poor communication skills. Second, the long scientific training period in the academic ivory tower is spent conversing with fellow scientists, with minimal interaction with non-technical professionals and the general public. As a result, the lingua franca among scientists is heavily interspersed with jargon, leading to poor communication with non-scientists. This article will describe best practices and frameworks that help professional scientists and non-scientists in commercial scientific enterprises communicate effectively.

How should scientists speak with non-scientists?

Industry

This section describes how professional scientists in industries like biotech and pharma can communicate better with cross-functional stakeholders from non-technical teams like sales, marketing, legal, business, product, finance, and accounting.

Cross-functional collaboration

In industry, scientists are often embedded in self-contained business or product teams alongside colleagues in different roles.
Taking a biotech product like a new drug to market, with its long development cycle, involves extensive collaboration between specialists from multiple domains: research, quality assurance, legal and compliance, project management, risk and safety, vendor and supplier management, sales, marketing, logistics, and distribution, to name a few. Scientists are involved from the beginning of the process. However, scientists are often guilty of focusing solely on R&D without closely considering how the science and technology underlying the product or business are operationalized by cross-functional teams and delivered to the market. Scientists are often less aware of the practical challenges of taking a drug prototype to the patient, such as long timelines due to multiple steps like risk management, safety reviews, regulatory approvals, coordination with pharmaceutical and logistics companies, and bureaucratic hurdles with governments and international bodies. This is a serious mistake in collaborative industry environments and often leads to a poor work experience for scientists and for their non-scientist peers and managers. The image below shows several communication challenges at the different stages of the drug development process that hinder successful commercialization. Although the various specialists share a common objective, each domain expert speaks a different “language” influenced by their respective training and fails to translate their opinions and concerns into a common language that all can understand. This gets in the way of optimal decision-making and results in projects that stall even before demonstrating clinical efficacy. In an industry with a 90% drug development failure rate, poor communication and collaboration can be very expensive, to the tune of USD 1.3 billion per drug. The right culture is crucial to ensure successful outcomes, as advocated by AstraZeneca after a thorough review of their drug development pipeline.
A recent real-world example is the development of the AstraZeneca Covid-19 vaccine by multiple teams at the University of Oxford. Although the vaccine was developed within two weeks by February 2020, it was not until 30 December 2020 that it was finally approved for use in the UK, and to date it has not been authorized for use in the US. In particular, the AstraZeneca vaccine was subject to misinformation, fake news, and fear-mongering, which led to vaccine hesitancy and a lack of public trust. This led Drs. Sarah Gilbert and Catherine Green, co-developers of the vaccine, to author ‘Vaxxers,’ with the primary motivation of allaying fears and reassuring the general public about the vaccine’s safety and efficacy by explaining the science and process behind its creation.

Stakeholder management

Another critical aspect of working with cross-functional teams involves managing key stakeholders to ensure a successful outcome for the project. Stakeholders often come from diverse non-scientific backgrounds, which makes working with them more challenging for scientists. The main challenge in effective stakeholder management is understanding the professional goals, metrics, and KPIs that drive each stakeholder. For instance, a product manager might focus on metrics like cost improvement over time, risk mitigation, or timelines; a finance leader may be focused on revenue; a compliance manager may be focused on metrics that capture safety and legal aspects. Understanding each cross-functional stakeholder’s north star can help scientists navigate the intricacies of stakeholder management. Effective stakeholder management involves several steps:

Identifying stakeholders

The first step is to identify the stakeholders that are critical to the success of the scientific product and understand their motivations and priorities. Successful stakeholder management starts by mapping your stakeholders across several dimensions, including:

Aligning stakeholders

Conflicting priorities among stakeholders are common and need to be resolved delicately. Achieving multi-stakeholder alignment for complex projects requires carefully planned discussions and negotiations to assess the lay of the land with each stakeholder and preempt potential conflicts. Focused group meetings that prioritize key points of disagreement or conflicting priorities can help achieve alignment and avoid conflicts.

Engaging stakeholders

After getting all the stakeholders aligned, it is useful to build a communication strategy for sharing project updates regularly. The communication plan must be tailored to each stakeholder. For example, individual contributors might need a high-touch approach, while project coordinators and administrators might just want periodic updates and high-level presentations. During the project’s execution phase, continuous engagement and clear communication with the stakeholders are essential to keep everyone on the same page. Stakeholders may be involved in multiple biotech projects in parallel, and your project may not be their sole focus or priority. We have previously written about several modes of communication and project management apart from one-on-one meetings. At a minimum, it is beneficial to maintain a project status board detailing the progress of each milestone, metric, team, and timeline to serve as a single source of truth, especially if some teams are working remotely.

Entrepreneurship

This section will discuss how aspiring startup founders with a scientific background should communicate and “sell” the company’s mission to varied stakeholders such as investors, employees, vendors, and potential hires. Scientists with domain expertise and an entrepreneurial mindset are increasingly opting to build deep-tech startups soon after leaving academia.
From Genentech to Moderna and CRISPR Therapeutics to BioNTech, there is no shortage of successful biotech companies founded by scientists. However, building a commercially viable biotech startup requires diverse skills, and excellent communication matters even more than it does in an established company. Scientist-founders need exceptional communication and sales skills to pitch the company to raise venture capital, write scientific grants, forge business partnerships with other companies, retain customers, attract talented employees with their vision for the company, give media interviews, and shape a mission-oriented organizational culture. Scientist-founders must communicate particularly well to bridge the gap between scientific research and commercialization.

How should non-scientists speak with scientists?

In this section, we will consider the viewpoint of non-scientists and how they can communicate more effectively with scientists. Non-scientists are typically more focused on product, business, sales, marketing, and related aspects of commercializing scientific research. The stakes for effective communication between scientists and managers are very high. This is best highlighted by NASA’s missions, which involve a diverse set of experts, both scientific and non-scientific, similar to the highly complex, multi-year projects described in the previous section. NASA’s failures on projects like the Columbia mission have been attributed to deficiencies in communication and an insular organizational culture, namely management not heeding the warnings of scientists and engineers. These communication failures are documented in a post-hoc report by the Columbia Accident Investigation Board: "Over time, a pattern of ineffective communication has resulted, leaving risks improperly defined, problems unreported, and concerns unexpressed," the report said. "The question is, why?"
Unfortunately, this state of affairs rings true even today in high-stakes and complex scientific enterprises. Here are some recommended tips that follow from such catastrophic mishaps and failures in workplace communication:

How can non-scientists better engage scientists?

Non-scientist stakeholders’ work largely focuses on business metrics, product roadmaps, customer research, project management, and similar areas. These are critical focus areas on which non-scientists need to keep their scientist colleagues clearly updated. In industry, it is common to see scientists not actively participating in discussions focused on business topics and switching off until their own work is the topic of discussion. It is crucial to engage scientists because they are on the front lines of core product development and are in a better position to understand and flag potential roadblocks in manufacturing, commercialization, and logistics based on prior experience. Many product-related issues and bugs that surface later in the development cycle can be caught and addressed earlier if there is more proactive communication between scientific and non-scientific teams. Scientists are generally trained to be conservative, focusing on accuracy and reliability, which can conflict with a manager’s ambitious goals for time-to-market or revenue targets. In these situations, managers should allow scientists to voice their concerns, not be afraid to dive deeper, coordinate with other cross-functional stakeholders, and make a balanced decision that integrates every stakeholder’s views. In the long term, cultivating an open and progressive culture that encourages debate and tough discussions reaps enormous benefits, because no business-critical concern is left unvoiced. A transparent and meritocratic culture promotes greater cooperation and understanding among different teams striving towards the same goals.

Conclusion

We discussed why scientists often struggle to communicate effectively with other scientists and non-scientist stakeholders when working in industry or building their own company. We addressed how scientists should approach communication with non-scientist colleagues and how to collaborate with them.
We also discussed effective communication strategies from the perspective of non-scientists speaking to scientists. In the long run, having strong communication and soft skills confers greater career durability than simply having scientific and technical skills. Understanding this and upskilling accordingly can empower scientists to transition and perform well in industry.

Published by Unbox.ai

Introduction