
Vector databases have recently gained prominence with the rise of large language models and generative AI. A vector database is a data store that holds unstructured data such as text in the form of vector embeddings for use by AI models and applications. Embeddings are high-dimensional vector representations of text that convey rich semantic information and offer an efficient way of capturing unstructured data.

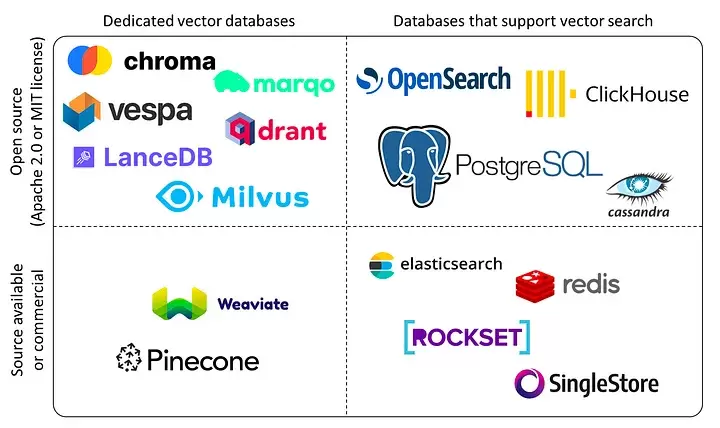

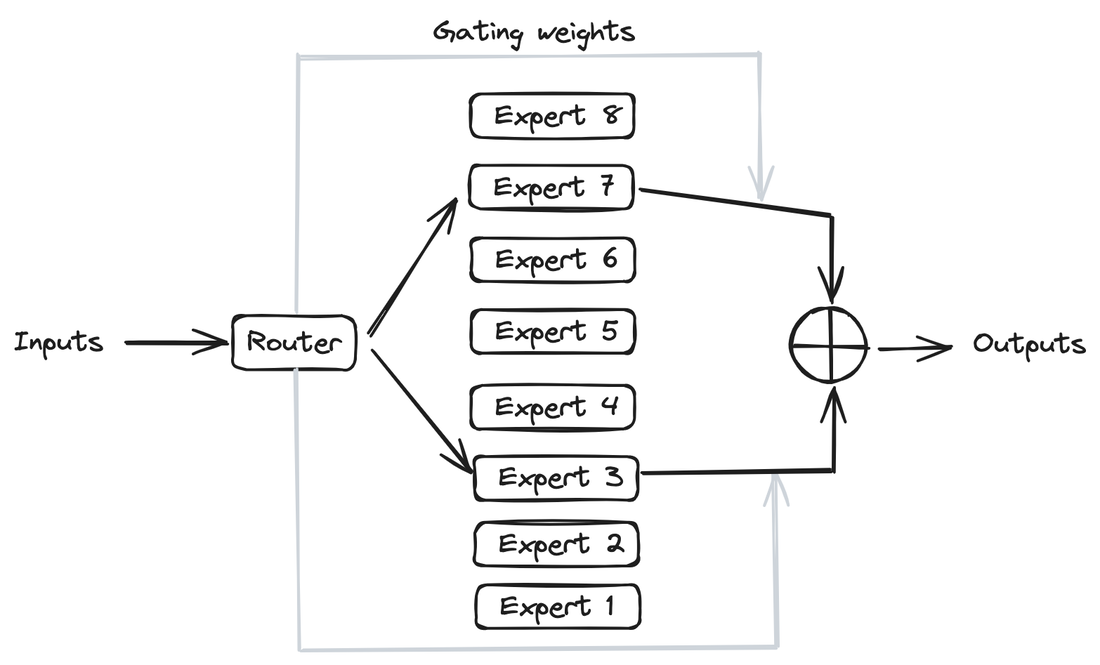

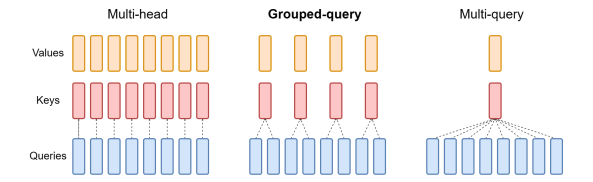

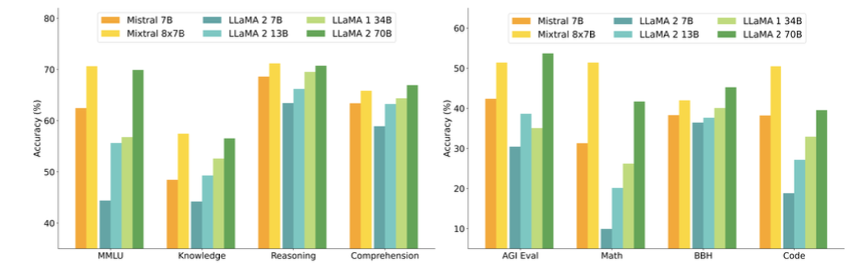

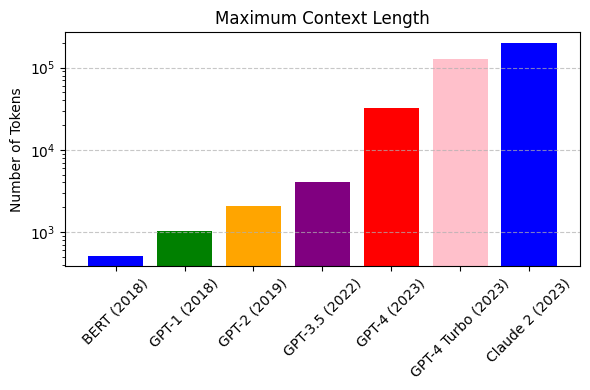

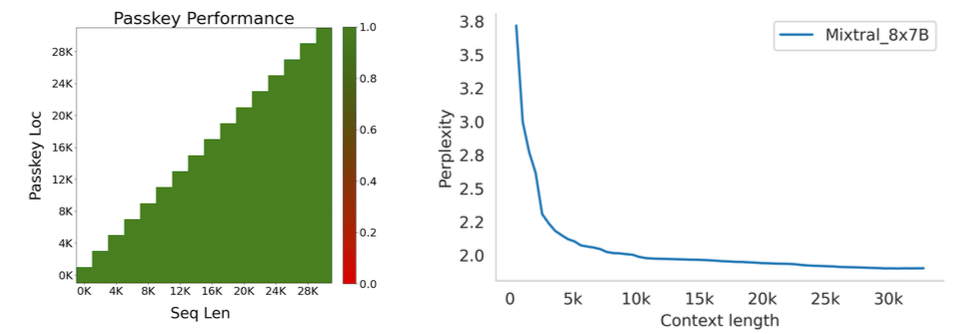

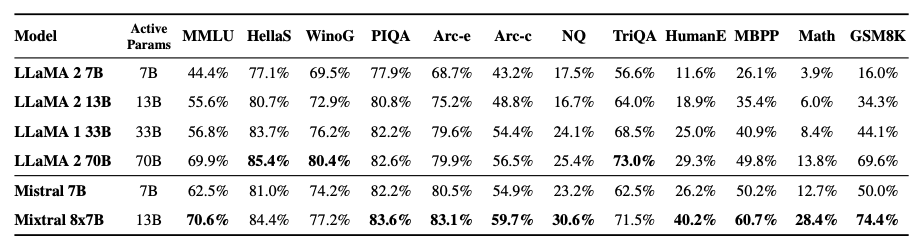

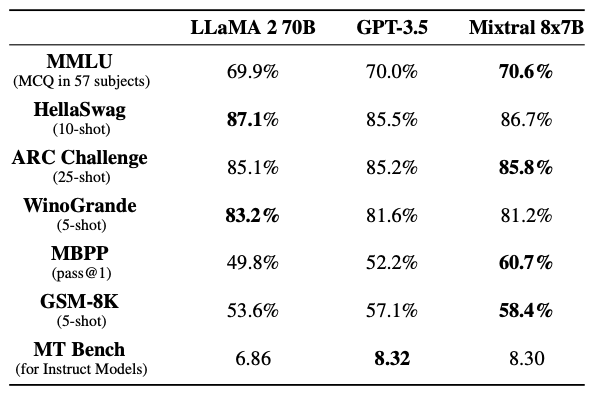

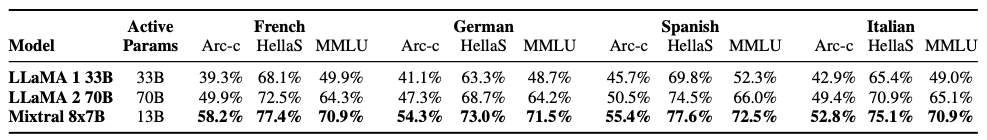

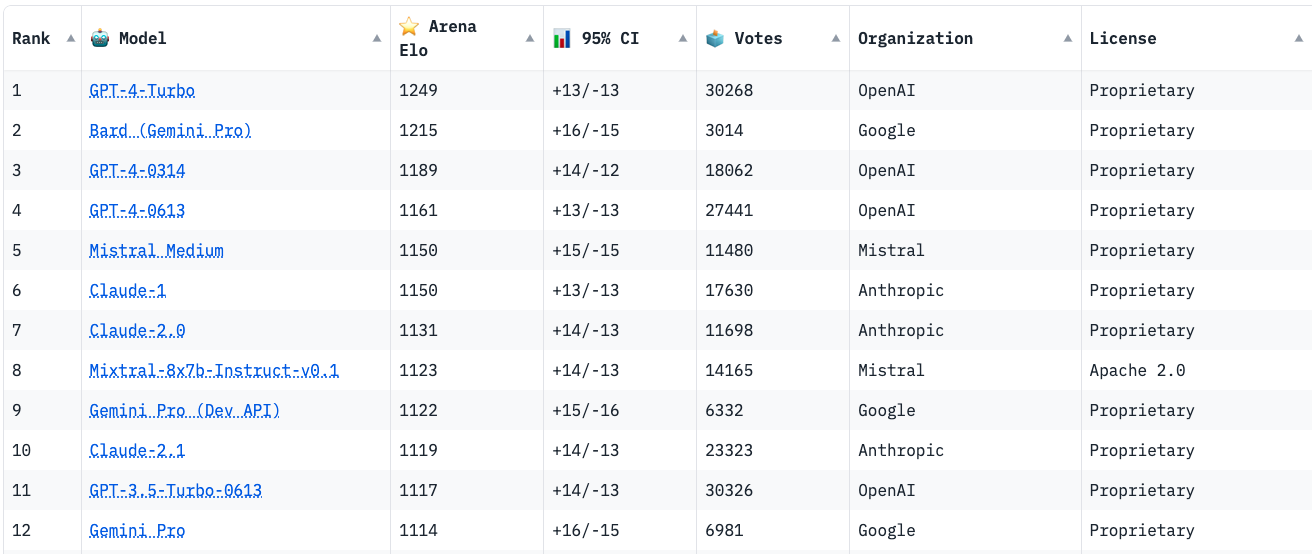

The rising popularity of large language models like GPT-4, Gemini, Claude-2, Llama-2, Mixtral and others has fuelled tremendous interest across the industry in building generative AI applications based on these models. Vector databases are specialized for handling vector data that is used to train or fine-tune these foundational models for domain- and company-specific use cases. Unlike traditional scalar-based databases, vector databases offer optimized storage and querying capabilities for vector embeddings. Although several vector databases are now available in the market, such as Pinecone, Chroma, and Qdrant, deciding which one to choose for enterprise use cases is not straightforward. In this article, you will learn how to choose a vector database for your organization based on criteria such as performance, reliability, scalability, cost-efficiency, developer experience, security, and technical support.

Key Considerations

In this section, you will learn in detail about each of the key factors that should be considered when making your final selection of a vector database. These include data and use case characteristics, performance, functionality, enterprise-readiness, developer experience, and future roadmap.

1. Data and Use Case

It is important to work backwards from the specific business use case that you are planning to solve by leveraging organizational data and the latest techniques from the field of generative AI. For instance, if your business objective is to build an enterprise knowledge management chatbot like McKinsey’s Lilli, you will need to organize and prepare all the in-house text data such as documents, emails, and chat messages. The use case defines several aspects of the data, including its size, frequency, data type, growth in volume over time, and freshness, and consequently the nature of the underlying vector embeddings to be stored in the vector database.
These vectors may be sparse, dense, and also span multiple modalities depending on the use case. Additionally, careful planning and scoping of the use case also helps you understand other crucial aspects such as the number of users, the number of queries per day, the peak number of queries at any given instant, as well as the query patterns of the users.

Vector databases utilize indexing and vector search powered by k-nearest neighbors (kNN) or approximate nearest neighbor (ANN) algorithms. This enables a vector db to perform similarity search and identify the most similar vectors in the database. This capability underlies enterprise use cases such as question-answering, document analysis, recommender systems, and image and voice recognition.

2. Performance

2.1 Query latency and queries per second (QPS)

The primary performance metrics of a vector db are the query latency, i.e., the time it takes to run a query and get the result, and the queries per second (QPS), i.e., the throughput in terms of the number of queries processed in a second. These parameters are critical for ensuring a seamless user experience in applications that require real-time results, such as chatbots. Typical QPS values range from ~50-300 and the average query latency from 25-100 ms depending on the underlying hardware.

2.2 Scalability

Scalability measures the ability of the vector database to grow and expand further to support the requirements of its customers. The scale can be measured in terms of the number of embeddings that can be supported and in terms of vertical scaling of existing resources and horizontal scaling across additional servers. Typically, most existing vector db companies provide scale-out capabilities up to a billion vectors without any performance degradation. If the resources can scale automatically, then you can rest assured that your application will always be up and running.
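
To make the similarity search from section 1 and the two metrics from section 2.1 concrete, here is a minimal sketch in plain Python. It assumes an exact (brute-force) kNN search with cosine similarity over a tiny in-memory store; the names `knn_search`, `measure`, and `store` are illustrative only and do not correspond to any vendor's API. Production vector databases typically rely on ANN indexes instead of scanning every vector.

```python
import math
import time


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def knn_search(query, vectors, k=2):
    """Exact kNN: score every stored vector and keep the k most similar ids."""
    scored = [(cosine_similarity(query, vec), vec_id) for vec_id, vec in vectors.items()]
    scored.sort(reverse=True)
    return [vec_id for _, vec_id in scored[:k]]


def measure(queries, vectors, k=2):
    """Run queries one at a time; return (mean latency in ms, queries per second)."""
    start = time.perf_counter()
    for q in queries:
        knn_search(q, vectors, k)
    elapsed = time.perf_counter() - start
    return 1000 * elapsed / len(queries), len(queries) / elapsed


# Toy 3-dimensional "embeddings" (hypothetical data); real ones have hundreds of dimensions.
store = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.9, 0.1, 0.0],
    "doc_c": [0.0, 1.0, 0.0],
}
top = knn_search([1.0, 0.05, 0.0], store, k=2)
latency_ms, qps = measure([[1.0, 0.05, 0.0]] * 1000, store)
print(top, f"{latency_ms:.4f} ms/query", f"{qps:.0f} QPS")
```

Measuring latency and QPS on your own data and hardware in this way, rather than relying on published figures, gives a fairer basis for comparing candidate databases.
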

2.3 Accuracy

A vector database is only as good as its accuracy in retrieving the right set of results for user queries. Here, the choice of vector search algorithm used to identify data whose embeddings are similar to the embedding of the user query is pivotal. Vector search can be powered by exact kNN, by ANN algorithms, or by libraries such as FAISS and NGT that implement them. ANN algorithms generate approximate results, and the best vector databases provide a good trade-off between speed and accuracy.

3. Functionality

3.1 Filtering on metadata

In practice, filtering vector search results based on metadata helps reduce the search space, thus providing faster and more accurate search results. Typical metadata includes information like dates, versions, and tags, and the ability of a vector database to store multiple metadata fields allows for a better search experience.

3.2 Integrations

Integrating a vector database into the existing data and engineering infrastructure in your organization is critical to faster adoption and shorter time to value. The ability of a vector database to integrate seamlessly with essential infrastructure elements like the cloud infrastructure, underlying large language models, and other databases is a key factor to consider.

3.3 Cost-efficiency

While performance metrics and functionality are core to a technology, the cost should be reasonable and fit your budget. The pricing of vector databases is a function of the number of ‘write’ operations, such as updates and deletes, and the number of queries. Other factors that affect the cost include the dimensionality of the embeddings, the number of vectors stored in the database, and the size of the metadata. Depending on your use case and requirements, it is essential to estimate the overall cost of running your application at scale on a monthly or quarterly basis and evaluate it relative to your budget and the expected revenue from running the AI applications.

4. Enterprise-readiness

4.1 Security and compliance

For most enterprise companies, it is imperative that any external vendor they employ meets strict security and compliance requirements. These requirements include SOC2, GDPR, HIPAA, ISO compliance and others, depending on the domain in which the company operates. Data privacy and security standards have risen in light of recent cybersecurity attacks and breaches of customer data, and you should ensure that any vector db vendor meets your specific security and compliance requirements.

4.2 Cloud setup

Many modern companies have undergone digital transformation and house their entire data and infrastructure in the cloud rather than on-premises. You may choose to manage and maintain your infrastructure via a self-hosted setup or go for a fully managed SaaS platform. The benefit of a fully managed system is that it automates cluster management, so you rarely need to provision and scale clusters or take care of operational issues yourself.

4.3 Availability

Availability, i.e., the ability of your vector db to run without interruptions, issues or downtime, is essential to avoid adversely impacting user experience. Most vector database providers commit to specific SLAs, which should meet the requirements of your applications. Typical values include 99.9% for the uptime SLA and a few hours to a few business days for the response time SLA, depending on the severity of the production issue.

4.4 Technical support

More often than not, you will at some point be stuck with an issue in your vector db and need hands-on support from the vendor to troubleshoot it. Does the company provide you with a dedicated team that is available at short notice to get on a call and figure out how to solve the problem? The responsiveness and quality of customer support provided by a vector db company is valuable and helps you develop a stronger sense of trust in the company.
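
To put the uptime SLA from section 4.3 into perspective, the short sketch below converts an uptime percentage into the downtime it still permits. The function name and the 30-day month are assumptions made for illustration; check how each vendor defines its measurement window.

```python
def allowed_downtime_minutes(uptime_percent, days=30):
    """Maximum downtime in minutes permitted by an uptime SLA over `days` days."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - uptime_percent / 100)


for sla in (99.0, 99.9, 99.99):
    print(f"{sla}% uptime allows {allowed_downtime_minutes(sla):.1f} minutes of downtime per 30 days")
```

A 99.9% SLA therefore still allows roughly 43 minutes of downtime in a 30-day month, which you can weigh against the tolerance of your application.
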

4.5 Open source vs Closed source

Some vector databases, such as Pinecone, are closed source and operate under a proprietary license. At the same time, there are a host of vector databases, such as Qdrant or Chroma, that are open source under the Apache 2.0 license while also offering a fully managed service. This can also influence your choice of vector db provider.

5. Developer experience

5.1 Community

Software and AI engineers are the core professionals who will work on the vector db, integrate it into the company’s infrastructure, and deploy your generative AI application to production. Therefore, the quality of experience that developers have with a vector db solution is integral in shaping your final decision. Having an open-source community on Slack or Discord helps build more engagement and trust with developers than commercial vendor support alone. It gives your developers an opportunity to learn from developers at other companies and to discuss and solve issues by leveraging the wisdom of the community.

5.2 Onboarding

Onboarding a new technology is challenging, and it determines the time your developer team takes to properly understand the product, integrate it, troubleshoot any issues, and become expert in using the vector database. The availability of APIs and SDKs as well as clear product demos and documentation goes a long way in lowering the barriers to understanding a new vector database so that your developers can build with speed and confidence.

5.3 Time to value

Similar to the time to onboard a new vector db, another important factor is the time to business value. If a vector db provider can support fast deployment of a production-ready application, then you can realize value sooner and meet your business goals faster. A long gestation time from onboarding to business value is a deterrent for many fast-moving companies and startups, especially in the current frantic race to adopt and ship generative AI applications.

5.4 Documentation

The quality of the vector database’s documentation determines the time to onboard, time to value, and trust in the provider’s expertise and product. Clear instructions with tutorials, examples and case studies help your developers understand and master the vector db faster.

5.5 User education

Similar to community-based offerings, expert technical content such as blogs, demos and videos focused on existing as well as new features helps your team understand and build faster. In addition to text and video content, other offerings like user testimonials, workshops, and conferences also help educate your team and build more trust in the vector db provider.

6. Future roadmap

A final factor to consider is the product roadmap of the vector database provider. Vector databases are an emerging technology that will need to continuously evolve alongside the advances in generative AI models, chip design and hardware, and novel enterprise use cases across domains. Therefore, the vector db vendor should show that it is evaluating long-term industry trends such as sophisticated vectorization techniques for a wider variety of data types, hybrid databases, optimized hardware accelerators for AI applications such as GPUs and TPUs, distributed vector dbs, real-time and streaming data based applications, as well as industry-specific solutions that might require advanced data privacy and security.

Conclusion

Vector databases are an essential ingredient for modern generative AI applications built on unstructured data such as text. Their popularity has increased in parallel with developments in the generative AI field, such as large language models and large image models, as they serve as the underlying database for handling high-dimensional data stored as vector embeddings. In this article, you learned about several important pillars to help you decide on a vector database.
These factors include data and use case considerations, performance-based requirements such as query speed and scalability, functionality requirements such as integrations and cost-efficiency, enterprise-readiness including security and compliance, and developer experience including community and documentation. Several vector database companies have emerged to build this foundational infrastructure. There is no single ‘best’ vendor of vector db and the ultimate choice is highly contingent on your organization’s business goals. Therefore, a data-driven approach guided by the factors listed in this article will help you select the most suitable vector db for your organization.

1. Introduction

Mistral is a pioneering French AI startup that launched its own foundational large language model, called Mistral 7B, in September 2023. As of the date of launch, it was the best 7 billion parameter language model, outperforming even larger language models like the 13 billion parameter Llama 2 across multiple benchmarks. In addition to its performance, Mistral 7B is also popular because the model is open-sourced under the Apache 2.0 license with the model weights available for download. Mixtral 8x7B (hereafter referred to as “Mixtral”) is the latest model from Mistral, released in December 2023 and described in a paper published in January 2024, and represents a significant extension of their prior work on Mistral 7B. It is a Sparse Mixture of Experts (SMoE) language model with stronger capabilities than Mistral 7B. It uses 13B active parameters during inference out of a total of 47B parameters, and supports multiple languages, code, and a 32k-token context window. In this blog, you will learn about the details of the Mixtral language model architecture, its performance on various standard benchmarks vis-a-vis state-of-the-art large language models like Llama 1 and 2 and GPT3.5, as well as potential use cases and applications.

2. Mixtral

Mixtral is a mixture-of-experts network, similar to GPT4.
While GPT4 is said to consist of 8 expert models of 222B parameters each, Mixtral is a mixture of 8 experts of 7B parameters each. Mixtral requires only a subset of the total parameters during decoding, which allows faster inference at low batch sizes and higher throughput at large batch sizes.

2.1 Sparse Mixture of Experts

Figure 1 illustrates the Mixture of Experts (MoE) layer. Mixtral has 8 experts, and each input token is routed to two experts with different sets of weights. The final output is a weighted sum of the outputs of the expert networks, where the weights are determined by the output of the gating network. The number of experts (n) and the number of top experts selected per token (K) are hyperparameters that are set to 8 and 2 respectively. The number of experts, n, determines the total or sparse parameter count, while K determines the number of active parameters used for processing each input token. The MoE layer is applied independently per input token in lieu of the feed-forward sub-block of the original Transformer architecture. Each MoE layer can be run independently on a single GPU using a model parallelism distributed training strategy.

2.2 Mistral 7B

Mixtral’s core architecture is similar to Mistral 7B, and therefore, a review of its architecture is relevant for a more comprehensive understanding of Mixtral. Mistral 7B is based on the Transformer architecture. In comparison to Llama, it has a few novel features that contribute to it surpassing Llama 2 (13B) on various benchmarks.

2.2.1 Grouped-Query Attention

Grouped-Query Attention (GQA) is an extension of multi-query attention, which uses multiple query heads but a single key head and a single value head. Popular language models like PaLM employ multi-query attention. GQA represents an interpolation between multi-head and multi-query attention, with a single key and value head per subgroup of query heads. As shown in figure 2, GQA divides query heads into G groups, each of which shares a single key and value head.
This differs from multi-query attention, which shares a single key and value head across all query heads. GQA is an important feature as it significantly accelerates inference and also reduces the memory requirements during decoding. This enables the models to scale to higher batch sizes and higher throughput, which is a critical requirement for real-time AI applications.

2.2.2 Sliding Window Attention

Sliding window attention (SWA), introduced in the Longformer architecture, exploits the stacked layers of a Transformer to attend to information beyond the typical window size. SWA is designed to attend to a much longer sequence of tokens than vanilla attention, and also offers significant reductions in computational cost. The combination of GQA and SWA enhances the performance of Mistral 7B, and therefore Mixtral, relative to other language models like the Llama series.

3. Performance

3.1 Standard benchmarks

The authors of Mixtral benchmarked the performance of the model on a range of standard benchmarks and evaluated the accuracy of Mixtral versus leading language models like Llama 1, Llama 2, and GPT3.5, as shown in figure 3, table 1, and table 2. In summary, Mixtral is better than much larger language models with up to 70B parameters, like Llama 2 70B, while using only 13B active parameters during inference (about 18.5% of 70B). Mixtral’s performance is especially superior in tasks focused on mathematics, code generation, and multilingual comprehension.

3.2 Multilingual understanding

Table 3 shows the performance of Mixtral versus Llama models on multilingual benchmarks. As Mixtral was pretrained with a significantly higher proportion of multilingual data, it is able to outperform Llama 2 70B on multilingual tasks in French, German, Spanish, and Italian while being comparable in English.
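
Looking back at the routing described in section 2.1, the sketch below shows how a single token could be sent to its top K = 2 of n = 8 experts and how their outputs are mixed. It is a toy illustration, not Mixtral's implementation: the experts are hypothetical functions that simply scale the token, the router scores are hard-coded, and the mixing weights are assumed to be a softmax over the selected router scores.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(token, gate_logits, experts, k=2):
    """Route one token to its top-k experts and return (mixed output, chosen expert ids).

    gate_logits: one router score per expert for this token.
    experts: list of callables, each mapping a token vector to an output vector.
    """
    # Pick the k experts with the highest router scores.
    chosen = sorted(range(len(experts)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Normalise the selected scores so the mixing weights sum to 1.
    weights = softmax([gate_logits[i] for i in chosen])
    output = [0.0] * len(token)
    for w, i in zip(weights, chosen):
        expert_out = experts[i](token)  # only k of the n experts are evaluated
        output = [o + w * e for o, e in zip(output, expert_out)]
    return output, chosen


# Hypothetical experts: expert i multiplies the token by (i + 1).
experts = [lambda t, s=i + 1: [s * x for x in t] for i in range(8)]
gate_logits = [0.0, 1.0, 0.0, 3.0, 0.0, 0.0, 0.0, 0.0]
output, chosen = moe_layer([1.0, 2.0], gate_logits, experts, k=2)
print(chosen, output)
```

Because only the chosen experts run, the compute per token depends on K rather than on n, which is the source of the gap between total and active parameters.
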

3.3 Long-range performance

As shown in figure 4, the input context length of language models has increased by more than two orders of magnitude in the last few years, from 512 tokens for the BERT model to 200k tokens for Claude 2.1. However, most large language models struggle to use the longer context efficiently. Liu and colleagues showed that current language models do not robustly make use of information in long input contexts. Their performance is typically highest when the relevant information for tasks such as question-answering or key-value retrieval occurs at the beginning or the end of the input context, and degrades significantly when the models need to access information in the middle of long contexts. Mixtral, which has a context size of 32k tokens, overcomes this deficit and shows 100% retrieval accuracy on a passkey retrieval task regardless of the context length or the position of the key to be retrieved in a long context. The perplexity, a metric that captures the capability of a language model to predict the next word given the context, decreases monotonically as the context length increases. Lower perplexity implies better prediction, and the Mixtral model is therefore capable of extremely good performance on tasks based on long context lengths, as shown in figure 5.

4. Instruction Fine-tuning

Instruction tuning refers to the process of further training large language models on a curated dataset containing (instruction, output) pairs of training samples. Instruction tuning is a computationally efficient method for extending the capabilities of large language models in diverse domains without extensive retraining or architectural changes. The “Mixtral - Instruct” model was fine-tuned on an instruction dataset, followed by Direct Preference Optimization (DPO) on a paired feedback dataset. DPO is a technique to optimize large language models to adhere to human preferences without explicit reward modeling or reinforcement learning.
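
As a rough illustration of how DPO avoids a separate reward model, the sketch below computes the standard DPO loss for a single preference pair from log-probabilities alone. The function and argument names are made up for this example, and the numbers are hypothetical; it is not the training code used for Mixtral - Instruct.

```python
import math


def dpo_loss(policy_logp_chosen, policy_logp_rejected,
             ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO loss for one (chosen, rejected) pair of responses to the same prompt.

    Each argument is the summed log-probability of a full response under the
    model being trained (policy) or under the frozen reference model.
    """
    chosen_margin = policy_logp_chosen - ref_logp_chosen
    rejected_margin = policy_logp_rejected - ref_logp_rejected
    z = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(z)), written in a numerically stable form
    if z > 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


# Hypothetical log-probabilities: the policy has shifted towards the chosen response.
before = dpo_loss(-10.0, -10.0, -10.0, -10.0)
after = dpo_loss(-5.0, -15.0, -10.0, -10.0)
print(f"loss before: {before:.4f}, after: {after:.4f}")
```

The loss falls as the policy raises the likelihood of the preferred response relative to the reference model, and `beta` controls how strongly the policy is allowed to drift from that reference.
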
As of January 26, 2024, on the standard LMSys Leaderboard, Mixtral - Instruct continues to be the best performing open-source large language model. This leaderboard is a crowdsourced open platform for evaluating large language models that ranks models using the Elo rating system from chess. Mixtral - Instruct only ranks below proprietary models like OpenAI’s GPT-4, Google’s Bard and Anthropic’s Claude models, while being a significantly smaller model. This extremely strong performance, combined with an open-source friendly Apache 2.0 license, opens up the possibility of tremendous adoption of Mixtral for both commercial and non-commercial applications. It represents a much more powerful alternative to Llama 2 70B, which is already being used as the foundational model for extending large language models to other languages like Hindi or Tamil that are spoken widely but not adequately represented in the training datasets of these large language models.

5. Use Cases

Mixtral represents the numero uno of open-source large language models as it clearly outperforms the previous best open-source model, Llama 2 70B, by a significant margin, while providing faster and cheaper inference. At the time of writing this article, Mixtral has been available as open source for less than two months and we are yet to see many examples of how it is being used in the industry. However, there are some early movers, like the Brave browser, which has already incorporated Mixtral in its AI-based browser assistant, Leo. Brave also uses Mixtral to power programming-related queries in Brave Search. It is only a matter of time before Mixtral sees widespread adoption across industry for a variety of use cases and challenges the hegemony of proprietary models like OpenAI’s GPT-4.

6. Conclusion

Mixtral is a cutting-edge mixture-of-experts model with state-of-the-art performance among open-source models. It consistently outperforms Llama 2 70B on a variety of benchmarks while having 5x fewer active parameters during inference. It thus allows for faster, more accurate and more cost-effective performance on diverse tasks including mathematics, code generation, and multilingual understanding. Mixtral - Instruct also outperforms proprietary models such as Gemini-Pro, Claude-2.1, and GPT-3.5 Turbo on human evaluation benchmarks. Mixtral thus represents a powerful alternative to the much larger and more compute-intensive Llama 2 70B as the de facto best open-source model, and will facilitate the development of new methods and applications benefitting a wide variety of domains and industries.

Published by Pachyderm

MLOps refers to the practice of delivering machine-learning models through repeatable and efficient workflows. It consists of a set of practices that focus on various aspects of the machine-learning lifecycle, from the raw data to serving the model in production.

Despite the routine nature of many of these MLOps tasks, it’s not uncommon for several steps to still be processed manually, incurring massive ongoing maintenance costs. Your organization can benefit tremendously from automating MLOps to achieve efficiency, reliability, and cost-effectiveness at scale. For example, automation could:

However, many companies lack the capabilities, talent, and infrastructure to drive machine-learning models to production reliably and efficiently. This not only means wasted time and resources but also hinders adoption of and trust in AI. The sooner that companies of any size, enterprises and startups alike, invest in automating their MLOps processes to expedite delivery of machine-learning models, the sooner they can meet their business goals. So, let’s talk about six methods for automating MLOps that can help streamline the continuous delivery of machine-learning models to production.

1. Automated Data-driven Pipelines

Delivering a machine-learning model involves numerous steps, from processing the raw data to serving the model in production. Machine-learning pipelines consist of several connected components that can execute automatically in an independent and modular fashion. For instance, different pipelines can focus on data processing, model training, and model deployment. When it comes to machine learning, data is as important as code, if not more so; data-driven pipelines track changes in training data and automatically trigger processing of new or changed data. Such automated data-driven pipelines kickstart further iterations of data processing and model training based on the new datasets. Without automated pipelines, the data science team executes these steps manually. This inevitably leads to manual errors, production delays, and a lack of visibility into the overall pipeline for relevant stakeholders. Manually built pipelines are harder to troubleshoot when defects creep into production, and so compound technical debt for the MLOps team. Automating pipelines can significantly reduce manual effort and free up organizational time, resources, and bandwidth so your MLOps team can focus on other challenges.

2. Automated Version Control

In the realm of software engineering, version control refers to the tracking of changes in code, making it easier for large teams to monitor, troubleshoot and collaborate. In machine learning, the need for version control applies to data as well as code. Version control is especially critical for machine-learning applications in domains like healthcare and finance that have a higher burden of model explainability, data privacy, and compliance. Automating version control for machine learning ensures that the history of the different moving parts (code, data, configurations, models, pipelines) is centrally maintained and fully automated. Through automated version control, your MLOps team can more efficiently trace bugs, roll back changes that didn’t work, and collaborate with greater transparency and reliability.

3. Automated Deployment

Large data science organizations develop multiple models trained on structured and unstructured data for various use cases. Some of these models need to make predictions in real time at ultra-low latencies while others may be invoked less often or serve as inputs to other models. All these models need to be periodically retrained to improve performance and mitigate challenges due to data drift. Deploying models manually in such a complex business environment is highly inefficient and time consuming. Manual deployment is cumbersome and can cause serious errors that impact model serving and the quality of model predictions. This often leads to poor customer experience and customer churn. Deployment of models to production involves several steps. It starts with choosing multiple environments and services for staging the model, selecting appropriate servers that can handle the production traffic, and pushing the model forward to production.
It then includes monitoring model performance and data drift, automating model retraining with more recent data and inputs, and ensuring the reliability of the models through better testing and security. Automating these steps yields several benefits:

4. Automated Feature Selection for Model Training

Classical machine-learning models are trained on data with hundreds to thousands of features, i.e., key variables in the dataset that are often correlated with model performance. Choosing a set of features that significantly account for the predictive power of the trained models is therefore essential. Feature selection by hand is cumbersome and requires significant subject matter expertise. Automating feature selection not only helps train the machine-learning model faster on a smaller dataset but also makes the model easier to interpret. Selecting fewer features but with high feature importance is critical in the preparation of training data. Automated feature selection helps reduce the size of the model to make faster predictions, or to increase the speed of training your machine learning or deep learning model. Feature selection can be automated using either unsupervised learning techniques, like principal component analysis, or supervised methods using statistical tests like the F-test, t-test, or chi-squared test.

5. Automated Data Consistency Checks

A central focus of data-centric AI is the quality of data used to train machine-learning models. Data quality determines the accuracy of the models, which in turn impacts business decision-making. So the underlying data must have minimal errors, inconsistencies, or missing values. Simplify the challenge of ensuring data quality and consistency by automating unit tests that check data types, expected values, missing cells, column and row names, and counts. Consider extending your automation to the analysis and reporting of the statistical properties of relevant features. If the training dataset consists of a few thousand to millions of samples and hundreds to thousands of features, you can’t manually evaluate every row and column for data consistency. Automated routines that test for different types of data inconsistencies make it easier to eliminate poor quality data.
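
The kind of automated consistency check described in section 5 can be sketched in a few lines of plain Python. The schema format, the `check_rows` helper, and the sample rows below are assumptions made for illustration; in practice you might use a dedicated data validation library and run such checks as part of a pipeline.

```python
def check_rows(rows, schema):
    """Return a list of human-readable problems found in `rows`.

    schema maps column name -> (expected type, min value, max value);
    min and max may both be None to skip the range check.
    """
    problems = []
    for i, row in enumerate(rows):
        for col, (expected_type, low, high) in schema.items():
            if col not in row:
                problems.append(f"row {i}: column '{col}' is absent")
                continue
            value = row[col]
            if value is None:
                problems.append(f"row {i}: '{col}' is missing a value")
            elif not isinstance(value, expected_type):
                problems.append(f"row {i}: '{col}' has type {type(value).__name__}")
            elif low is not None and not (low <= value <= high):
                problems.append(f"row {i}: '{col}'={value} is outside [{low}, {high}]")
    return problems


# Hypothetical training rows and schema.
schema = {"age": (int, 0, 120), "weight_kg": (float, 1.0, 500.0)}
rows = [
    {"age": 42, "weight_kg": 70.5},
    {"age": None, "weight_kg": 68.0},
    {"age": 230, "weight_kg": "heavy"},
]
problems = check_rows(rows, schema)
for p in problems:
    print(p)
```

Failing the pipeline whenever `problems` is non-empty keeps inconsistent data from silently reaching model training.
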

6. Automated Script Shortcuts

Processing data and training machine-learning models involves a lot of boilerplate code. Automate the creation of scripts for common tasks to save time and effort while providing better visibility and version control. Typically, data scientists and machine-learning engineers create their own unique automations and shortcuts, which are seldom shared among the larger team. However, having a centralized repository of script shortcuts reduces the need to improvise, and spares team members from reinventing the wheel. Save these shortcuts as executable bash scripts for different use cases like downloading data from data lakes or uploading model artifacts to backup folders.

Automate MLOps with Pachyderm

Fortunately, you don’t have to build these MLOps automation features in-house from scratch. Pachyderm is a software platform that integrates with all the major cloud providers to continuously monitor changes in data at the level of individual files. Whenever any existing file is modified or new files are added to a training dataset, Pachyderm triggers events for pipelines and launches a new iteration of data transformation, data quality testing, or model training. Pachyderm can take care of automated version control and lineage for data as well as [deployment](https://www.pachyderm.com/events/how-to-build-a-robust-ml-workflow-with-pachyderm-and-seldon/). It also enables autoscaling and parallel processing on Kubernetes, orchestrating server resources for deployment at scale.

Conclusion

With a lot of the machine learning lifecycle still handled manually across the industry, consider automating any of the six MLOps tasks we covered here in order to achieve efficiency and reliability at scale:

A data science organization’s level of automation across its machine-learning lifecycle indicates its maturity. The velocity of training and delivering new machine-learning models to production increases significantly with that maturity, leading to faster realization of business impact. Pachyderm, a leading enterprise-grade data science platform, helps make explainable, repeatable, and scalable machine learning systems a reality. Its automated data pipeline and versioning tools can power complex data transformations for machine learning while remaining cost effective.

Introduction

Traditional machine learning is based on training models on data sets that are stored in a centralized location like an on-premise server or cloud storage. For domains like healthcare, privacy and compliance issues complicate the collection, storage, and sharing of critical patient and medical data. This poses a considerable challenge for building machine learning models for healthcare. Federated learning is a technique that enables collaborative machine learning without the need for centralized training data. A shared machine learning model is trained by keeping all the training data on a device, thereby ensuring higher levels of privacy and security compared to the traditional machine learning setup where data is stored in the cloud. This technique is especially useful in domains with high security and privacy constraints like healthcare, finance, or governance. Users benefit from the power of personalized machine learning models without compromising their sensitive data. This article describes federated learning and its various applications with a special focus on healthcare.

How Does Federated Learning Work?

This section discusses in detail how federated learning works for a hypothetical use case of a number of healthcare institutions working collaboratively to build a deep learning model to analyze MRI scans. In a typical federated learning setup, there’s a centralized server, for instance, in the cloud, that interacts with multiple sources of training data, such as hospitals in this example. The centralized server houses a global deep learning model for the specific use case that is copied to each hospital to train on its own data set. Each hospital in this setup trains the global deep learning model locally for a few iterations on its internal data set and sends the updated version of the model back to the centralized server.
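
One round of this exchange, local training followed by server-side averaging, can be sketched as follows. This is a toy illustration under stated assumptions: the "model" is a two-parameter linear fit rather than a deep network, the hospitals and their data are hypothetical, the server is assumed to combine updates with a sample-weighted average (a common choice often called federated averaging), and encryption of the updates is not modelled.

```python
def train_locally(weights, data, lr=0.05, steps=5):
    """A few gradient-descent steps on mean squared error for y = w * x + b."""
    w, b = weights
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return (w, b)


def federated_average(updates, sizes):
    """Average client weights, weighting each client by its number of samples."""
    total = sum(sizes)
    dims = len(updates[0])
    return tuple(sum(u[d] * s for u, s in zip(updates, sizes)) / total for d in range(dims))


# Hypothetical hospitals: each keeps its own (x, y) samples, all drawn from y = 2x + 1.
hospital_data = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]
global_weights = (0.0, 0.0)
for _ in range(200):
    # Each hospital trains on its own data; only the weights leave the premises.
    updates = [train_locally(global_weights, data) for data in hospital_data]
    global_weights = federated_average(updates, [len(d) for d in hospital_data])
print(global_weights)
```

Note that the raw `(x, y)` samples are never passed to `federated_average`; the server only ever sees model weights and sample counts.
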
Each model update is then sent to the cloud server using encrypted communication protocols, where it’s averaged with the updates from other hospitals to improve the shared global model. The updated parameters are then shared with the participating hospitals so that they can continue local training. In this fashion, the global model can learn the intricacies of the diverse data sets stored across various partner hospitals and become more robust and accurate. At the same time, the collaborating hospitals never have to send their confidential patient data outside their premises, which helps ensure that they don’t violate strict regulatory requirements like HIPAA. The data from each hospital is secured within its own infrastructure. This unique federated learning setup is easily scalable and can accommodate new partner hospitals; it also remains unaffected if any of the existing partners decide to exit the arrangement. Use Cases for Federated Learning in Healthcare Federated learning has immense potential across many industries, including mobile applications, healthcare, and digital health. It has already been used successfully for healthcare applications, including health data management, remote health monitoring, medical imaging, and COVID-19 detection. As an example of its use for mobile applications, Google used this technique to improve Smart Text Selection on Android mobile phones. In this use case, it enables users to select, copy, and use text quickly by predicting the desired word or sequence of words based on user input. Each time a user taps to select a piece of text and corrects the model’s suggestion, the global model receives precise feedback that’s used to improve the model. Federated learning is also relevant for autonomous vehicles to improve real-time decision-making and real-time data collection about traffic and roads. 
Self-driving cars require real-time updates, and the above types of information can be effectively pooled from several vehicles in real time using federated learning. Privacy and Security With increased focus on data privacy laws from governments and regulatory bodies, protecting user data is of utmost importance. Many companies store customer data, including personally identifiable information such as names, addresses, mobile numbers, and email addresses. Apart from these static data types, user interactions with companies, such as chats, emails, and phone calls, also carry sensitive details that need to be protected from hackers and malicious attacks. Many startups and large tech companies are investing heavily in privacy-enhancing technologies like differential privacy, homomorphic encryption, and federated learning to ensure that customers have a pleasant user experience without their personal data being compromised. In the healthcare industry, federated learning is a promising technology that allows, for example, hospitals to share electronic health records (EHR) to create more accurate models. Privacy is preserved without violating strict HIPAA standards by decentralizing the data processing, which is distributed among multiple end-points instead of being managed from a central server. Simply put, federated learning allows training of machine learning models without the need to collect raw data in a central location; instead, the data used by each end-point (in this example, hospitals) remains local. By combining the above with differential privacy, hospitals can even provide a quantifiable measure of data anonymization. Federated Learning vs. Distributed Learning and Edge Computing Federated learning is often confused with distributed learning. In the context of deep learning, distributed training is used to train large, deep neural networks across a number of GPUs or machines. 
However, distributed learning relies on centralized training data shared across multiple nodes to increase the speed of model training. Federated learning, on the other hand, is based on decentralized data stored across a number of devices and produces a central, aggregate model. A fascinating example of the potential of this technology is using federated learning-based Person Movement Identification (PMI) through wearable devices for smart healthcare systems. Edge computing is a related concept where the data and model are centralized in the same individual device. Edge computing doesn’t train models that learn from data stored across multiple devices, as in the case of federated learning. Instead, a centrally trained model is deployed on an edge device, where it runs on data collected from that device. For example, edge computing is applied in the context of Amazon Alexa devices, where a wake word detection model is stored on the device to detect every utterance of “Alexa.” AI and Healthcare Federated machine learning has a strong appeal for healthcare applications. By design, patient and medical data is highly regulated and needs to adhere to strict security and privacy standards. By collating data from participating healthcare institutions, organizations can ensure that confidential patient data doesn’t leave their ecosystem; they can also benefit from machine learning models trained on data across a number of healthcare institutions. Large hospital networks can now work together and pool their data to build AI models for a variety of medical use cases. With federated learning, smaller community and rural hospitals with fewer resources and lower budgets can also benefit and provide better health outcomes to more of the population. This technique also helps to capture a greater variety of patient traits, including variations in age, gender, and ethnicity, which may vary significantly from one geographic region to another. 
Machine learning models based on such diverse data sets are likely to be less biased and more likely to produce more accurate results. In turn, the expert feedback of trained medical professionals can help to further improve the accuracy of the various AI models. Federated learning, therefore, has the potential to introduce massive innovations and discoveries in the healthcare industry and bring novel AI-driven applications to market and patients faster. Conclusion Federated learning enables secure, private, and collaborative machine learning where the training data doesn’t leave the user device or organizational infrastructure. It harnesses diverse data from various sources and produces an aggregate model that’s more accurate. This technique has introduced significant improvements in information sharing and increased the efficacy of collaborative machine learning between hospitals. It circumvents and overcomes the challenges of working with highly sensitive medical data while leveraging the power of state-of-the-art machine learning and deep learning. Related Blogs

Web3 is the third generation of the internet, based on emerging technologies like blockchains, tokens, DAOs, digital assets, and decentralised finance. It has the potential to give control of digital assets back to users with greater trust and transparency.

Typical web3 applications focus on DAOs, DeFi, Stablecoins, Privacy and digital infrastructure, the creator economy amongst others. The web3 ecosystem represents a promising green space for creators, developers, and various types of tech and non-tech professionals as well. In my talk (video and slides shared above) for Crater's Encrypt 2022 hackathon, I describe how AI can be leveraged to build commercially viable web3 applications for India. I cover a number of relevant AI/ML datasets, models, resources and applications for these domains, recognized by the Ministry of Electronics and Information Technology's National Strategy on Blockchain:

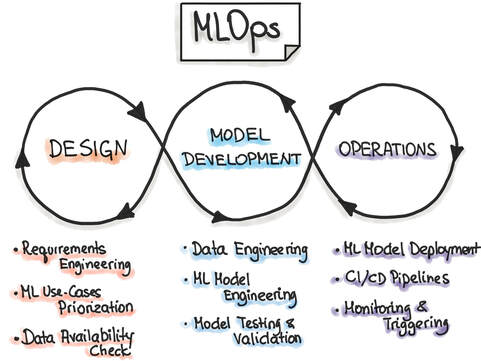

Machine learning operations (MLOps) refer to the emerging field of delivering machine learning models through repeatable and efficient workflows. The machine learning lifecycle is composed of various elements, as shown in the figure below. Similar to the practice of DevOps for managing the software development lifecycle, MLOps enables organizations to smooth the path to successful AI transformation by providing an engineering and technological backbone to underlying machine learning processes.

MLOps is a relatively new field, as the commercial use of AI at scale is itself a fairly new practice. MLOps is modeled on the existing field of DevOps, but in addition to code, it incorporates additional components, such as data, algorithms, and models. It includes various capabilities that allow the modern machine learning team, comprising data scientists, machine learning engineers, and software engineers, to organize the building blocks of machine learning systems and take models to production in an efficient, reliable, and reproducible fashion. MLOps tools MLOps is carried out using a diverse set of tools, each catering to a distinct component of the machine learning pipeline. Each tool under the MLOps umbrella is focused on automation and enabling repeatable workflows at scale. As the field of machine learning has evolved over the last decade, organizations are increasingly looking for tools and technologies that can help extract the maximum return from their investment in AI. In addition to cloud providers, like AWS, Azure, and GCP, there are a plethora of start-ups that focus on accommodating varied MLOps use cases. In this article, I will cover tools for the following MLOps categories:
Metadata management, versioning, experiment tracking, model deployment, and monitoring.

In the following section, I will list a selection of MLOps tools from the above categories. It is important to note that although a particular tool might be listed under a specific category, the majority of these tools have evolved from their initial use case into a platform for providing multiple MLOps solutions across the entire ML lifecycle. Metadata Management Building machine learning models involves many parameters associated with code, data, metrics, model hyperparameters, A/B testing, and model artifacts, among others. Reproducing the entire ML workflow requires careful storage and management of the above metadata. Featureform Featureform is a virtual feature store. It can integrate with various data platforms, and it enables the management and governance of the data from which features are built. With a unique, feature-first approach, Featureform has built a product called Embeddinghub, which is a vector database for machine learning embeddings. Embeddings are high-dimensional representations of different kinds of data and their interrelationships, such as user or text embeddings, that quantify the semantic similarity between items. MLflow MLflow is an open-source platform for the machine learning lifecycle that covers experimentation and deployment, and it also includes a central model registry. It has four principal components: Tracking, Projects, Models, and Model Registry. In terms of metadata management, the MLflow Tracking API is used for logging parameters, code, metrics, and model artifacts. Versioning For machine learning systems, versioning is a critical feature. As the pipeline consists of various data sets, labels, experiments, models, and hyperparameters, it is necessary to version control each of these parameters for greater accessibility, reproducibility, and collaboration across teams. Pachyderm Pachyderm provides a data layer for the machine learning lifecycle. 
It offers a suite of services for data versioning that are organized by data repository, commit, branch, file, and provenance. Data provenance captures the unique relationships between the various artifacts, like commits, branches, and repositories. DVC DVC, or Data Version Control, is an open-source version control system for machine learning projects. It includes version control for machine learning data sets, models, and any intermediate files. It also provides code and data provenance to allow for end-to-end tracking of the evolution of each machine learning model, which promotes better reproducibility and usage during the experimentation phase. Experiment Tracking A typical machine learning system may only be deployed after hundreds of experiments. To optimize the model performance, data scientists perform numerous experiments to identify the most appropriate set of data and model parameters for the success criteria. Managing these experiments is paramount for staying on top of the data science modeling efforts of individual practitioners, as well as the entire data science team. Comet Comet is a machine learning platform for managing and optimizing the entire machine learning lifecycle, from experiment tracking to model monitoring. Comet streamlines the experimentation workflow for data scientists and enables clear tracking and visualization of the results of each experiment. It also allows side-by-side comparisons of experiments so users can easily see how model performance is affected. Weights & Biases Weights & Biases is another popular machine learning platform that provides a host of services, including [experiment tracking](https://wandb.ai/site/experiment-tracking). It facilitates tracking and visualization of every experiment, allows rerunning previous model checkpoints, and can monitor CPU and GPU usage in real time. 
Model Deployment Once a machine learning model is built and tests have found it to be robust and accurate enough to go to production, the model is deployed. This is an extremely important aspect of the machine learning lifecycle, and if not managed well, it can lead to errors and poor performance in production. AI models are increasingly being deployed across a range of platforms, from on-premises servers to the cloud to edge devices. Balancing the trade-offs for each kind of deployment and scaling the service up or down during critical periods are very difficult tasks to achieve manually. A number of platforms provide model deployment capabilities that automate the entire process of taking a model to production. Seldon Seldon is a model deployment software that helps enterprises manage, serve, and scale machine learning models in any language or framework on Kubernetes. It’s focused on expediting the process to take a model from proof of concept to production, and it’s compatible with a variety of cloud providers. Kubeflow Kubeflow is an open-source system for productionizing models on the Kubernetes platform. It simplifies machine learning workflows on Kubernetes and provides greater portability and scalability. It can run on any hardware and infrastructure on which Kubernetes is running, and it is a very popular choice for machine learning engineers when deploying models. Monitoring Once a model is in production, it is essential to monitor its performance and log any errors or issues that may have caused the model to break in production. Monitoring solutions enable setting thresholds as indicators for robust model performance and are critical in solving for known issues, like data drift. These tools can also monitor the model predictions for bias and explainability. Fiddler Fiddler is a machine learning model performance monitoring software. To ensure expected model performance, it monitors data drift, data integrity, and anomalies in the data. 
Additionally, it provides model explainability solutions that help identify, troubleshoot, and understand underlying problems and causes of poor performance. Evidently Evidently is an open-source machine learning model monitoring solution. It measures model health, data drift, target drift, data integrity, and feature correlations to provide a holistic view of model performance. Conclusion MLOps is a growing field that focuses on organizing and accelerating the entire machine learning lifecycle through best practices, tools, and frameworks borrowed from the DevOps philosophy of software development lifecycle management. With machine learning, the need for tooling is much greater, as machine learning is built on foundational blocks of data and models, as well as code. To bring reliability, maturity, and scale to machine learning processes, a diverse set of MLOps tools are being increasingly used. These tools are developed for optimizing the nuts and bolts of machine learning operations, including metadata management, versioning, model building and experiment tracking, model deployment, and monitoring in production. Over the past decade, the field of AI and machine learning has grown rapidly, with organizations embracing AI and recognizing its critical importance for transforming their business. The field of MLOps is still young, but the creation and adoption of tools will further empower organizations in their journey of AI transformation and value creation. Related Blogs Published by CloudForecast Introduction

Amazon Redshift is a widely used cloud data warehouse that is used by many businesses, like Nasdaq, GE, and Zynga, to process analytical queries and analyze exabytes of data across databases, data lakes, data warehouses, and third-party data sets. There are multiple use cases for Redshift, including enhancing business intelligence capabilities, increasing developer and analyst productivity, and building machine learning models for predictive insights, like demand forecasting. Amazon Redshift can be leveraged by modern data-driven organizations to vastly improve their data warehousing and analytics capabilities. However, the pricing for Redshift services can be challenging to understand, with multiple criteria that define the total cost. In this article, you’ll learn about Amazon Redshift and its pricing structure, with suggestions for how to optimize costs. What Is Amazon Redshift? Essentially, Amazon Redshift provides analytics over multiple databases and offers high scalability in a secure and compliant fashion. Additionally, there is a serverless option called Amazon Redshift Serverless that makes it even easier to rapidly scale analytics setup without requiring a managed data warehouse infrastructure. It helps with data democratization and assists various data stakeholders to extract data insights by simply loading and querying data in the warehouse. Amazon Redshift Pricing In this section, you’ll learn about Amazon Redshift’s capabilities as it pertains to usage and pricing. Free Tier For new enterprise users, the AWS Free Tier provides a free two-month trial of the DC2.Large node. This free service includes 750 hours per month, which is sufficient to run a single DC2.Large node with 160GB of compressed solid-state drives (SSD). On-Demand Pricing When you launch an Amazon Redshift cluster, you select a number of nodes in a specific region as well as their instance type to run your data warehouse. 
In on-demand pricing, a simple hourly rate applies based on the previous configuration and is billed as long as the cluster is live. The typical hourly rate for a DC2.Large node is $0.25 USD per hour. Redshift Serverless Pricing With Amazon Redshift Serverless, costs accrue only when the data warehouse is active and is measured in units of Redshift Processing Units (RPUs). You’re charged in terms of RPU-hours on a per-second basis. The serverless configuration also includes concurrency scaling and Amazon Redshift Spectrum, and the cost for these services is already included. Managed Storage Pricing Amazon Redshift charges for the data stored in a managed storage at a specific rate per GB-month. Its usage is calculated on an hourly basis as a function of the total amount of data and starts as low as $0.024 USD per GB with the RA3 node. The cost of a managed storage also varies according to the particular AWS region in which the data is stored. For example, consider the cost of a managed storage pricing where 100TB of data is stored with an RA3 node type for thirty days in the US East region, where the cost is $0.024 USD per GB-month. The total usage for thirty days in GB-hours is as follows: 100TB × 1024GB/TB (converting TB to GB) × 30 days × 24 hours/day = 73,728,000 GB-hours Then you can convert GB-hours to GB-months: 73,728,000 GB-hours / (24 × 30) hours per month = 102,400 GB-months Finally, you can calculate the total cost of 102,400 GB-months at $0.024 USD/GB-month in the US East region: 102,400 GB-months × $0.024 USD = $2,457.60 USD Spectrum Pricing With Amazon Redshift Spectrum, users can run SQL queries directly on the data in the S3 buckets. Here, the cost is based on the number of bytes scanned by the Spectrum utility. The pricing of Redshift Spectrum is $5 USD per terabyte of data scanned. Concurrency Scaling Pricing With Concurrency Scaling, Amazon Redshift can be scaled to multiple concurrent users and queries. 
For every twenty-four hours that your main cluster is live, you accrue a one-hour credit. Any additional usage is charged at a per-second, on-demand rate that depends on the number and types of nodes in the main cluster. Reserved Instance Pricing Reserved instances are designated for stable production workloads and are less expensive than clusters run on an on-demand basis. Significant cost savings can be achieved through long-term usage and commitment to Amazon Redshift in the span of a few years. Pricing for reserved instances can be paid all up front, partially up front, or monthly over the course of a year with no up-front charges. Amazon Redshift Cost Optimization Considerations Before you begin using Amazon Redshift, you need to be aware of your current costs. AWS Cost Explorer The AWS Pricing Calculator provides a configurable tool to estimate the cost of using Amazon Redshift. For instance, the annual cost of one node of the DC2.8xlarge instance in the US East (Ohio) region on an on-demand basis is as follows: 1 instance × $4.80 USD hourly × 730 hours in a month × 12 months = $42,048 USD The cost for the same Amazon Redshift configuration for a reserved instance for a one-year term paid up front is $27,640 USD. AWS Tags Using AWS cost allocation tags can help you decode and manage your AWS costs. Tags enable AWS resources to be labeled in the form of key-value pairs and can include various types, like technical, business, security, and automation. Once the tags are activated in the Billing and Cost Management console, a cost allocation report can be generated based on the specific resources tagged. Tags can be user-defined or AWS-generated. Amazon Redshift Cost Optimization Optimizing Amazon Redshift costs comes down to effective planning, prudent usage and allocation of resources, and regular monitoring of the usage and associated costs. 
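The pricing arithmetic quoted in the sections above is easy to reproduce and vary in code. The sketch below uses only figures stated in this article (the $0.024 per GB-month RA3 storage rate, the $4.80 hourly DC2.8xlarge rate, and the $27,640 one-year upfront reserved price); the function names are hypothetical, and the rates should be checked against current AWS pricing before being used for planning.

```python
STORAGE_HOURS_PER_MONTH = 24 * 30  # the article's GB-hours to GB-months conversion
ON_DEMAND_HOURS_PER_MONTH = 730    # the article's on-demand month length


def managed_storage_cost(terabytes, rate_per_gb_month, days=30):
    """Managed storage cost: TB -> GB-hours -> GB-months -> USD."""
    gb_hours = terabytes * 1024 * days * 24
    gb_months = gb_hours / STORAGE_HOURS_PER_MONTH
    return gb_months * rate_per_gb_month


def on_demand_annual_cost(nodes, hourly_rate, months=12):
    """Annual on-demand cost for a cluster that runs continuously."""
    return nodes * hourly_rate * ON_DEMAND_HOURS_PER_MONTH * months


def reserved_savings(on_demand_cost, reserved_cost):
    """Absolute and fractional savings of reserved over on-demand pricing."""
    saved = on_demand_cost - reserved_cost
    return saved, saved / on_demand_cost


storage = managed_storage_cost(100, 0.024)   # 100TB on RA3 in US East
on_demand = on_demand_annual_cost(1, 4.80)   # one DC2.8xlarge node
saved, fraction = reserved_savings(on_demand, 27_640)

print(f"Managed storage, 30 days: ${storage:,.2f}")
print(f"On-demand, 1 year:        ${on_demand:,.2f}")
print(f"Reserved saves:           ${saved:,.2f} ({fraction:.1%})")
```

Under these figures, the one-year upfront reservation costs roughly a third less than running the same node on demand for the full year.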
Optimizing Queries The analytical queries made on the data stored in Amazon Redshift can be optimized to run more efficiently. Queries can be compute-intensive, can be storage-intensive, or can take a long time to execute. There are a number of query tuning techniques that can be used to optimize your queries. Tables with skewed data or missing statistics, and queries with nested loops and long wait times, typically affect query performance and can be improved as illustrated in this AWS developer guide. Here is a commonly used but inefficient query that selects all the columns in a table: SELECT * FROM USERS The previous query can be very inefficient and slow if the table consists of thousands of columns, especially if only a few columns are relevant for the necessary analysis. This query can be optimized by specifying and retrieving the exact column names like the following: SELECT Firstname, Lastname, DOB FROM USERS Cluster Limits and Quotas Usage limits on Amazon Redshift clusters can be programmed using the AWS Command Line Interface (CLI) tool. Limits can be imposed on Concurrency Scaling in terms of time and on Redshift Spectrum in terms of data scanned. Daily, weekly, or monthly periods can be used. A number of limits and quotas are defined for Redshift resources that can also be applied to constrain the overall costs associated with Redshift. Data Type Amazon Redshift costs can also be managed by storing data in a compressed, partitioned, and columnar data format, like Apache Parquet, since less data is scanned. Conclusion Amazon Redshift is a powerful and cost-effective cloud-native data warehouse that provides scalable and performant data analytics and processing capabilities. It also comes with a serverless configuration that allows any data stakeholder to run data queries without the need to provision and manage the data warehouse infrastructure. 
Amazon Redshift has multiple aspects affecting its pricing, including on-demand or reserved capabilities, serverless, managed storage pricing, Redshift Spectrum pricing, concurrency scaling pricing, and reserved instance pricing. Keeping on top of the various Amazon Redshift costs is not straightforward but can be made easier by AWS cost monitoring tools, like CloudForecast. CloudForecast helps manage AWS costs through daily cost management reports, monthly financial reports, untagged AWS resources discovery, and idle and underutilized resources visibility for cost-saving opportunities. Related blog Published by CloudForecast Introduction

Companies are increasingly moving their production code to serverless functions using AWS Lambda, which has gained popularity for its better code maintenance, low-cost hosting charges, and automatically scaled and optimized performance. But without careful oversight, Lambda can become an expensive choice for your project. Lambda, offered by market-leading AWS, offers many benefits. Lambda is one example of serverless functions, or single-purpose, programmatic functions hosted and maintained by cloud providers like AWS, Azure, or GCP to ensure near-perfect runtime and scaling to any incoming network request volume. Companies can use Lambda, an event-driven compute service, to run any type of application or backend service without worrying about provisioning or managing servers. Lambda adapts to a variety of use cases across startups and enterprises alike. It can process data at scale, run interactive web and mobile backend services, enable powerful machine learning models, and build in-house event-driven applications. It also specifies limits for the amount of compute and storage resources used to run and store serverless functions. These limits apply to a number of resources, such as the number of concurrent executions; storage for uploaded functions as well as quotas for function configuration; deployment and execution parameters like memory allocation; timeout; environment variables; layers; and burst concurrency. The key to using Lambda is keeping your costs in check. This article will review Lambda’s pricing structure to show how costs can be efficiently managed without compromising on operational excellence and execution of Lambda functions. It will also discuss tools like CloudForecast that can help engineering teams monitor and reduce their serverless computing costs on AWS. 
Understanding AWS Lambda Pricing AWS Lambda pricing is based on the amount of memory allocated to the serverless function and the amount of time the code runs, rounded to the nearest millisecond. The key variables that determine Lambda costs are the type of architecture, the number of requests, the time frame for which the requests apply, the duration of each request (in milliseconds), and the amount of memory allocated to the Lambda function. Each Lambda request starts when code executes in response to an event trigger from services like Amazon’s Simple Notification Service or calls from Amazon API Gateway or via the AWS SDK. The cost for each compute and storage resource is calculated depending on the function configuration. AWS offers a free tier that allows one million free requests per month and 400,000 GB-seconds of compute time per month powered by x86 and Graviton2 processors. It also offers a flexible pricing model called the Compute Savings Plan, based on guaranteed usage (measured in dollars per hour) for a one- or three-year term. AWS Lambda does offer an attractive feature called Provisioned Concurrency that enables greater control over start-up latency when Lambda functions are triggered. Provisioned concurrency solves the problem of variable start-up latency when a Lambda service is triggered on demand and scales up to meet the needs of the application workloads. This overhead in starting a Lambda function is referred to as cold start, and the magnitude of this problem is a function of the time taken to set up the execution environment and the duration for the code to be initialized. As illustrated in this official AWS example, with provisioned concurrency enabled, the percentage of requests served within a given time remains fairly constant—especially for the slowest five percent of the requests—in comparison to a scenario with provisioned concurrency disabled. 
At scale, this can have a massive impact not only on the costs but also on the user experience. While the first factor is controlled by AWS, the second factor falls to the developer. The code initialization duration is predominantly responsible for cold start latency. Provisioned concurrency solves for cold start by enabling Lambda functions to be initialized for high workloads in milliseconds. AWS provides a pricing calculator to estimate the cost of using Lambda for your applications. The below estimate provides pricing calculations for a sample application with the following settings:

The same pricing calculator can also provide an estimate for provisioned concurrency. In this case, in addition to the above parameters, the cost is a function of the amount of concurrency specified and the period of time the configuration is active. Controlling AWS Lambda Costs AWS Lambda does offer options for controlling costs, but as the above example showed, the cost of function calls can quickly scale up as part of the organizational application workload. If the configuration is not carefully monitored and fine-tuned for current applications, Lambda can become prohibitively expensive. You can keep AWS Lambda costs down by focusing on three important factors:

The cost of a Lambda function invocation is multiplied by its execution time and memory size, so reducing either factor by even a small amount can have a significant impact on billable costs. It’s important to ensure you have the correct configuration. Periodic monitoring of the actual values of the memory size and the number and duration of function calls can help confirm whether the current configuration is fine-tuned for the current workload. AWS Lambda logs are ingested into Amazon CloudWatch, so mining these logs can help optimize the configuration and the costs. External tools like CloudForecast can also monitor usage and costs. Avoiding high maximum execution time also helps save costs. It’s common to have a buffer of execution time beyond what’s specified, but the additional costs incurred by Lambda functions add up, making it prudent to change the value of the “duration of each request” parameter as needed. Lambda Step Functions can also help manage costs. Step functions are state machines with a visual workflow that allow developers to coordinate different tasks like calling various Lambda functions. Using step functions is a more efficient way to poll for the status of tasks. Typically, long polling increases the costs of Lambda functions as they are waiting idle, and step functions help alleviate the total costs based on the number of state transitions to execute the application, instead of the execution time of a workflow. Another tactical method to control Lambda costs is to evaluate whether your application can be run asynchronously. Running async workloads prevents idle downtime in which the AWS Lambda functions wait for external applications to complete. If the overall architecture can be analyzed for idle instances and reconfigured for asynchronous execution, the costs of Lambda functions can be drastically reduced. The frequency at which Lambda functions are invoked can also impact the usage and costs. 
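To see how the number of requests, their duration, and the allocated memory interact, a rough monthly estimate can be written as a small function. This is a sketch under stated assumptions: the free tier figures come from this article, while the default per-request and per-GB-second rates are illustrative values that are not taken from the article and should be checked against current AWS pricing for your region and architecture. The function name is hypothetical, and provisioned concurrency is not modeled.

```python
def lambda_monthly_cost(
    requests,
    avg_duration_ms,
    memory_mb,
    price_per_million_requests=0.20,    # assumed illustrative rate (USD)
    price_per_gb_second=0.0000166667,   # assumed illustrative rate (USD)
    free_requests=1_000_000,            # free tier, as stated in the article
    free_gb_seconds=400_000,            # free tier, as stated in the article
):
    """Rough monthly on-demand Lambda cost in USD."""
    # Compute usage is duration (seconds) times memory (GB), summed over requests.
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    billable_gb_seconds = max(gb_seconds - free_gb_seconds, 0)
    billable_requests = max(requests - free_requests, 0)
    compute_cost = billable_gb_seconds * price_per_gb_second
    request_cost = billable_requests / 1_000_000 * price_per_million_requests
    return compute_cost + request_cost


baseline = lambda_monthly_cost(5_000_000, avg_duration_ms=200, memory_mb=512)
less_memory = lambda_monthly_cost(5_000_000, avg_duration_ms=200, memory_mb=256)
fewer_calls = lambda_monthly_cost(2_500_000, avg_duration_ms=200, memory_mb=512)

print(f"baseline:    ${baseline:.2f}")
print(f"less memory: ${less_memory:.2f}")
print(f"fewer calls: ${fewer_calls:.2f}")
```

Comparing the three scenarios shows which lever matters most for a given workload; the `fewer_calls` case corresponds to reducing how often the function is invoked.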
Where applications like Kinesis are used as a Lambda function trigger, increasing the batch size can reduce the frequency at which the Lambda function needs to be invoked, thus reducing the total number of executions. Writing optimized production code always helps, and its lower execution time can reduce Lambda costs. You can, for instance, record and analyze the Duration metric in CloudWatch to find slow executions. For some applications, EC2 spot instances may be cheaper and more effective than Lambda functions. This is especially true for an application architecture in which the traffic is predictable and sustained, making a reliable EC2 spot instance a more suitable alternative. Conclusion AWS Lambda and serverless functions have had a tremendous impact on the efficient execution of software, data, and machine learning applications in the cloud. Lambda can help you achieve savings on your engineering costs, but it’s possible to reduce your costs even more by optimizing the configuration of your applications and fine-tuning your resources. Doing this work manually can require careful logging and monitoring of your application in production settings. Instead, you can use tools to automate and dynamically adjust Lambda function settings to reduce costs in a more cost- and time-efficient manner. One of those tools is CloudForecast, which can manage and optimize the cost of using AWS services like Lambda. CloudForecast provides an out-of-the-box solution for engineering teams to monitor their monthly budget and move toward a more responsible use of Lambda functions. Its detailed reports suggest ways to reduce AWS costs, and it can also provide reports for your finance and accounting teams. To learn more about how CloudForecast can help with your AWS Lambda costs, check its official blog. Related blog Data science teams are an integral part of early-stage or growth-stage start-ups as well as midlevel and enterprise companies.
A data science team can include a wide range of roles that take care of the end-to-end machine learning lifecycle from project conceptualization to execution, delivery, and monitoring:

The manager of a data science team in an enterprise organization has multiple responsibilities, including the following:

As the data science manager, it’s critical to have a structured, efficient hiring process, especially in a highly competitive job market where the demand outstrips the supply of data science and machine learning talent. A transparent, thoughtful, and open hiring process sends a strong signal to prospective candidates about the intent and culture of both the data science team and the company, and can make your company a stronger choice when the candidates are selecting an offer. In this blog, you’ll learn about key aspects of the process of hiring a top-class data science team. You’ll dive into the process of recruitment, interviewing, and evaluating candidates to learn how to find the ones who can help your business improve its data science capabilities. Benefits of an Efficient Hiring Process Recent events have accelerated organizations’ focus on digital and AI transformation, resulting in a very tight labor market when you’re looking for digital skills like data science, machine learning, statistics, and programming. A structured, efficient hiring process enables teams to move faster, make better decisions, and ensure a good experience for the candidates. Even if candidates don’t get an offer, a positive experience interacting with the data science and recruitment teams makes them more likely to share good feedback on platforms like Glassdoor, which might encourage others to interview at the company. Hiring Data Science Teams A good hiring process is a multistep process, and in this section, you’ll look at every step of the process in detail. Building a Funnel for Talent Depending on the size of the data science team, the hiring manager may have to assume the responsibility of reaching out to candidates and building a pipeline of talent. In larger organizations, managers can work with in-house recruiters or even third-party recruitment agencies to source talent.
It’s important for data science managers to clearly convey the requirements for the recruited candidates, such as the number of candidates desired and the profiles of those candidates. Candidate profiles might include things like previous experience, education or certifications, skill set or tech stack, and experience with specific use cases. Using these details, recruiters can then start their marketing, advertising, and outreach campaigns on platforms like LinkedIn, Glassdoor, Twitter, HackerRank, and LeetCode. In several cases, recruiters may identify candidates who are a strong fit but who may not be on the job market or are not actively looking for new roles. A database of all such candidates ought to be maintained so that recruiters can proactively reach out to them at a more suitable time and reengage the candidates. Another trusted way to identify good candidates is through employee referrals. An in-house employee referral program that incentivizes current employees to refer candidates from their network is often an effective way to attract the specific types of talent you’re looking for. The data science leader should also publicize their team’s work through channels like conferences or workshops, company blogs, podcasts, media, and social media. By investing dedicated time and energy in building up the profile of the data science team, it’s more likely that candidates will reach out to your company seeking data science opportunities. When looking for a diverse set of talent, the search can be difficult because data science is a male-dominated field. As a result, traditional recruiting paths will continue to reflect this bias. Reaching out and building relationships with groups such as Women in Data Science can help broaden the pipeline of talent you attract.
Defining Roles and Responsibilities Good candidates are more likely to apply for roles that have a clear job description, including a list of potential data science use cases, a list of required skills and tech stack, and a summary of the day-to-day work, as well as insights into the interviewing process and timelines. Crafting specific, accurate job descriptions is a critical, if often overlooked, aspect of attracting candidates. The more information and clarity you provide up front, the more likely it is that candidates have sufficient information to decide if it’s a suitable role for them and if they should go ahead with the application or not. If you’re struggling to create one, you can start with an existing job description template and then customize it in accordance with the needs of the team and company. It's also critical not to overpopulate a job description with every possible skill or experience you hope a candidate brings. That will narrow your potential applicant pool. Instead, focus on the skills and experiences that are absolutely critical. The right candidate will be able to pick up other skills on the job. It can be useful for the job description to include links to any recent publications, blogs, or interviews by members of the data science team. These links provide additional details about the type of work your team does and also offer candidates a glimpse of other team members. Here are some job description templates for the different roles in a data science team: Interviewing process When compared to software engineering interviews, the interview process for data science roles is still very unstructured, and data science candidates are often uncertain about what the interview process involves.
The professional position of data scientist has only existed for a little over a decade, and in that time, the role has evolved and transformed, resulting in even newer, more specialized roles, such as data engineer, machine learning engineer, applied scientist, research scientist, and product data scientist. Because of the diversity of roles that could be considered data science, it’s important for a data science manager to customize the interviewing process depending on the specific profile they’re seeking. Data scientists need to have expertise in multiple domains, and one or more second-round interviews can be tailored around these core skills: